Easy, Repeatable InfoScale Installation and Configuration with Ansible

Automating repeatable tasks such as installation and configuration is a smart way to reduce complexity and save time. With InfoScale, you can do this with Ansible [1], a configuration management and automation tool widely used to simplify datacenter management. My colleague Vineet Mishra wrote an introductory blog post on Ansible [2].

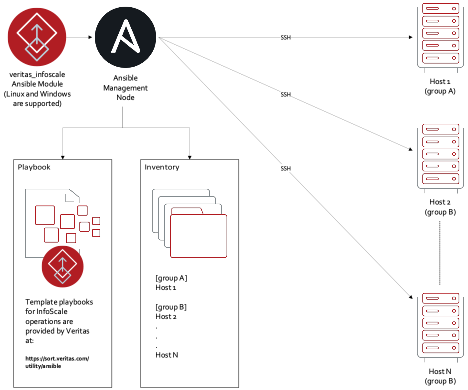

Unlike other configuration management and automation tools such as Chef and Puppet, Ansible does not require agents on the managed nodes. It operates primarily by running operations from a central “Control Node” against target nodes via SSH. Ansible is flexible and extensible: it supports a wide array of configuration tasks and lets third parties write modules for specific applications, such as the installation and configuration of InfoScale.

The Veritas InfoScale module for Ansible gives Ansible an interface for performing InfoScale-related operations. These operations are specified in "playbooks". When a playbook is run, the desired operations are executed via SSH on the target nodes, which are listed in an Ansible-specific object known as an “inventory”.
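As a rough illustration of the inventory and playbook objects, here is a minimal sketch. The group name `infoscale_nodes`, the host names, the addresses, and the file names are hypothetical placeholders. The task uses Ansible's built-in `ping` module rather than the InfoScale module, whose parameters are not reproduced here.

```yaml
# inventory.yml (hypothetical host names and documentation-range addresses)
all:
  children:
    infoscale_nodes:
      hosts:
        node1:
          ansible_host: 192.0.2.11
        node2:
          ansible_host: 192.0.2.12
        node3:
          ansible_host: 192.0.2.13
```

```yaml
# check_nodes.yml (hypothetical): confirms the control node can reach
# every target node over SSH before any real work is attempted
- name: Verify connectivity to the cluster nodes
  hosts: infoscale_nodes
  gather_facts: false
  tasks:
    - name: Ping each node over SSH
      ping:
```

With these two files in place, the playbook could be run from the control node with `ansible-playbook -i inventory.yml check_nodes.yml`.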

See Figure 1 for a block diagram showing how the InfoScale module and InfoScale-specific playbooks interact with Ansible. Example playbooks can be found at [3].

The following series of recorded demonstrations shows the installation and configuration of InfoScale on three RHEL8 VMware virtual machines:

- VM deployment with Ansible.

- Operating System Pre-requisites Installation.

- InfoScale Installation.

- InfoScale Cluster Configuration using the Storage Foundation Cluster File System High Availability Playbook.

- Disk Group creation.

- Cluster File System creation.

I have broken up the demonstration into the steps above for the sake of clarity. In practice, all steps can be executed in a single Ansible “play”.
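One way to combine the steps, sketched below, is a top-level playbook that imports one playbook per stage, so a single `ansible-playbook` run executes them in order. The file names are hypothetical. Chaining with `import_playbook` is an assumption about how the steps might be combined, not necessarily the layout used in the demonstration.

```yaml
# site.yml (hypothetical): runs the six stages back to back.
# Each imported file is assumed to hold the play for one stage.
- import_playbook: deploy_vms.yml
- import_playbook: os_prerequisites.yml
- import_playbook: infoscale_install.yml
- import_playbook: infoscale_cluster_config.yml
- import_playbook: disk_group.yml
- import_playbook: cluster_file_system.yml
```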

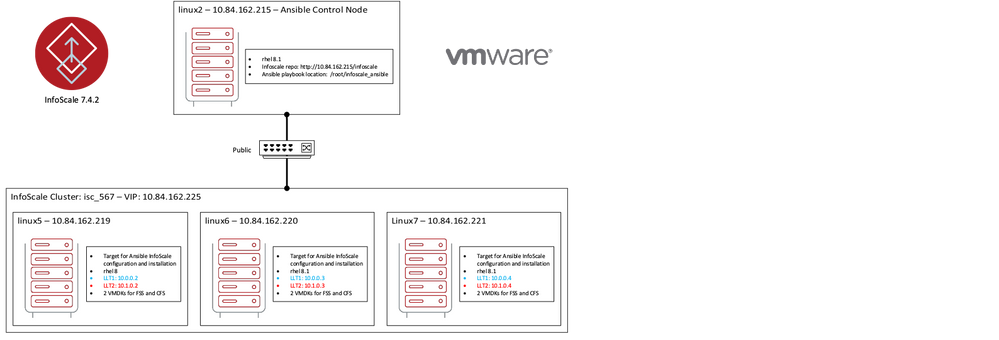

The topology for the demonstration includes the following components:

- vSphere 6.x

- 1 control node – RHEL8

- 3 InfoScale cluster nodes – RHEL8

- Ansible 2.9.x

- Python 2.7.x

- InfoScale 7.4.2

- 1 public network

- 2 private networks for InfoScale heartbeat (LLT)

- 2 data VMDKs per VM

Figure 2 displays the topology.

VM Deployment with Ansible

The first step in this demonstration is to deploy the VMs required to build the InfoScale cluster. Ansible clones the virtual machines from a VM template, configures them with the required IP addresses, connects the data disks, and starts them up. For more information on deploying VMs with Ansible and VMware, please see the Ansible VMware Guide [6].
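A clone-from-template task might look roughly like the sketch below, which uses the `vmware_guest` module covered in the Ansible VMware Guide. The vCenter address, datacenter, folder, template name, port group name, and VM names and addresses are all hypothetical. Attaching the data disks and private networks is left out for brevity.

```yaml
# deploy_vms.yml (hypothetical): clones three VMs from a template.
# vcenter_username and vcenter_password must be supplied by the caller,
# for example from an Ansible Vault file.
- name: Clone the InfoScale cluster VMs from a template
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    cluster_vms:
      - { name: node1, ip: 192.0.2.11 }
      - { name: node2, ip: 192.0.2.12 }
      - { name: node3, ip: 192.0.2.13 }
  tasks:
    - name: Clone, address, and power on each VM
      vmware_guest:
        hostname: vcenter.example.com      # hypothetical vCenter
        username: "{{ vcenter_username }}"
        password: "{{ vcenter_password }}"
        validate_certs: false              # lab setting only
        datacenter: DC1                    # hypothetical
        folder: /DC1/vm                    # hypothetical
        name: "{{ item.name }}"
        template: rhel8-template           # hypothetical template name
        state: poweredon
        networks:
          - name: public-net               # hypothetical port group
            ip: "{{ item.ip }}"
            netmask: 255.255.255.0
        wait_for_ip_address: true
      loop: "{{ cluster_vms }}"
```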

Operating System Pre-requisites Installation

This Ansible task installs the libraries and pre-requisite software required by InfoScale. In particular, I have included a task that sets GRUB to boot the 4.18.0-147 kernel, as it is qualified for InfoScale 7.4.2. This playbook also adds internal Veritas Red Hat repositories for updating and installing software.
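The sketch below shows what such a pre-requisites play could look like using stock Ansible modules. The repository URL, the group name `infoscale_nodes`, and the package list are placeholders, not the actual InfoScale requirements. The full kernel file name is an assumption built from the 4.18.0-147 version mentioned above.

```yaml
# os_prerequisites.yml (hypothetical): repository, packages, default kernel
- name: Prepare the operating system for InfoScale
  hosts: infoscale_nodes
  become: true
  vars:
    # Placeholder list; consult the InfoScale documentation for the real one
    prereq_packages: [perl, ksh, net-tools]
    # Assumed file name for the 4.18.0-147 kernel
    qualified_kernel: /boot/vmlinuz-4.18.0-147.el8.x86_64
  tasks:
    - name: Add an internal package repository
      yum_repository:
        name: internal-rhel8
        description: Internal RHEL 8 repository
        baseurl: http://repo.example.com/rhel8/BaseOS   # hypothetical URL
        gpgcheck: false

    - name: Install prerequisite packages
      package:
        name: "{{ prereq_packages }}"
        state: present

    - name: Read the current default kernel
      command: grubby --default-kernel
      register: default_kernel
      changed_when: false

    - name: Make the qualified kernel the default boot entry
      command: "grubby --set-default {{ qualified_kernel }}"
      when: default_kernel.stdout != qualified_kernel
```

Checking the current default before calling `grubby --set-default` keeps the play idempotent: a second run reports no change once the qualified kernel is already the default.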

InfoScale Installation

Up to this point, no InfoScale specific tasks have been performed. In this task, InfoScale software is installed on the three RHEL8 VMs, but InfoScale is not configured.

InfoScale Configuration

The Storage Foundation Cluster File System High Availability (SFCFSHA) configuration playbook creates an InfoScale cluster from the VMs prepared in the previous Ansible tasks.

Disk Group Creation

Each node of the InfoScale cluster is configured with two VMDK disk devices. These devices are shared with Flexible Storage Sharing (FSS) so that a shared disk infrastructure can be used to create clustered storage.

You may notice that only one node reports a change. This is expected: the operation to share disks with FSS and create the required disk group only needs to be executed from one node.

Cluster File System Creation

Finally, a Cluster File System is created.

Summary

All of these demos combined took about 25 minutes. For a 4-node, a 5-node, or any n-node cluster, the duration would still be about 25 minutes, because all the time-intensive operations (including InfoScale installation) are performed in parallel by Ansible.

For more information on InfoScale, please take a look at the following documents.

- Veritas InfoScale™ Enterprise: Managing Mission-Critical Applications in a software Defined Data Cen...

- Using Public Clouds for high-performance enterprise applications

Please browse the InfoScale technical library for additional InfoScale resources.

My example playbooks are available here: https://github.com/VeritasOS/infoscale_ansible

[2] https://vox.veritas.com/t5/Software-Defined-Storage/How-to-easily-deploy-and-configure-Infoscale-usi...

[3] https://sort.veritas.com/utility/ansible

[4] https://sort.veritas.com/utility/ansible

[5] https://sort.veritas.com/public/infoscale/ansible/linux/docs/Infoscale7.4.2_Ansible_Support_Linux_Gu...

[6] https://docs.ansible.com/ansible/latest/scenario_guides/guide_vmware.html
