Worried about your backup windows? We strive to keep them intact

The backup window depends primarily on the size of the data being protected. As rapid data growth keeps stretching backup windows, it becomes crucial to ensure that backup performance is not further degraded by product issues.

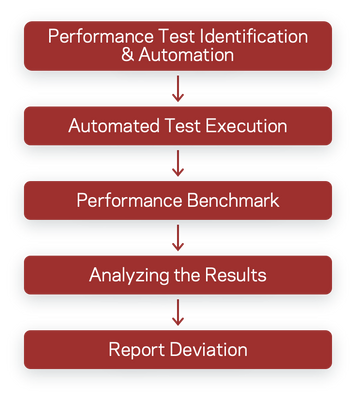

Periodic performance testing helps identify and investigate issues in a timely manner. Even a small change in the product code can have a large impact on backup throughput. Automation makes it possible to run multiple performance test iterations on incremental builds during the development phase.

How we do it:

For Backup Exec, we run performance tests for both existing and new features. Manual performance testing is time-consuming and cannot be done on daily builds, so automation helps in a big way: we run a set of regression test cases from various features against various devices and record the backup job time of each run. If a deviation from the benchmark values is found, the automated test reports an issue.

We identified a set of test cases covering various Backup Exec features, such as File system, Virtual (VMware and Hyper-V), and Exchange database, combined with different devices, such as Disk, Tape, Dedupe, and Cloud. For each feature, Full and Incremental backup jobs are executed several times, and the average backup job time is recorded. We then compare this backup time with the benchmark backup job time of a previously released product version.
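To give a sense of how quickly this test matrix grows, here is a minimal Python sketch that enumerates one test case per feature, device, and backup type, and counts the backup jobs a complete cycle would run. It assumes every feature is paired with every device and borrows the run counts (three Full, five Incremental) from the measuring criteria described later in this post; the names `build_test_matrix`, `total_jobs`, and `RUNS_PER_CASE` are hypothetical and not part of Backup Exec or its test framework.

```python
from itertools import product

# Features and devices named in the post; pairing every feature with every
# device is an assumption, since the exact matrix is not published.
FEATURES = ["File system", "VMware", "Hyper-V", "Exchange"]
DEVICES = ["Disk", "Tape", "Dedupe", "Cloud"]
BACKUP_TYPES = ["Full", "Incremental"]

# Runs per test case, taken from the measuring criteria (3 Full, 5 Incremental).
RUNS_PER_CASE = {"Full": 3, "Incremental": 5}


def build_test_matrix(features, devices, backup_types):
    """Return one (feature, device, backup_type) tuple per performance test case."""
    return list(product(features, devices, backup_types))


def total_jobs(matrix, runs_per_case):
    """Count the backup jobs needed to run every test case the required number of times."""
    return sum(runs_per_case[backup_type] for _, _, backup_type in matrix)


if __name__ == "__main__":
    matrix = build_test_matrix(FEATURES, DEVICES, BACKUP_TYPES)
    print(len(matrix))                          # 4 features x 4 devices x 2 types = 32
    print(total_jobs(matrix, RUNS_PER_CASE))    # 16 x 3 + 16 x 5 = 128
```

Even under these simplified assumptions, one regression cycle means well over a hundred backup jobs, which is why running it manually on every daily build is impractical.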

We wrote an automation framework and used it to automate regression test cases for various features. Each feature test case first runs a full backup; new data is then populated before each incremental backup. After each backup, all job details (job name, storage device, start time, end time, job completion time, etc.) are captured and posted to our performance reporting infrastructure, which consists of a front-end web server and a back-end SQL database. A set of SQL stored procedures processes the captured data and feeds it to the front-end web server for display.
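The capture-and-report step can be sketched as follows. This is a minimal illustration, not the team's actual framework: it uses Python's built-in `sqlite3` with an in-memory database as a stand-in for the back-end SQL database, and a `GROUP BY` query in place of the stored procedures (SQLite has no stored procedures). The `JobRecord` fields loosely follow the job details listed above, with `feature` and `backup_type` added as assumptions, and job completion time treated as elapsed time; the table and function names are hypothetical.

```python
import sqlite3
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class JobRecord:
    """Details captured after one backup job (hypothetical field set)."""
    job_name: str
    feature: str          # assumption: not in the post's list of job details
    storage_device: str
    backup_type: str      # assumption: "Full" or "Incremental"
    start_time: datetime
    end_time: datetime

    @property
    def duration_seconds(self) -> float:
        # Elapsed job time, derived from the captured start and end times
        return (self.end_time - self.start_time).total_seconds()


def create_schema(conn: sqlite3.Connection) -> None:
    conn.execute(
        """CREATE TABLE IF NOT EXISTS job_results (
               job_name TEXT, feature TEXT, storage_device TEXT, backup_type TEXT,
               start_time TEXT, end_time TEXT, duration_seconds REAL)"""
    )


def post_job(conn: sqlite3.Connection, job: JobRecord) -> None:
    """Store one captured job (stand-in for posting to the reporting infrastructure)."""
    conn.execute(
        "INSERT INTO job_results VALUES (?, ?, ?, ?, ?, ?, ?)",
        (job.job_name, job.feature, job.storage_device, job.backup_type,
         job.start_time.isoformat(), job.end_time.isoformat(), job.duration_seconds),
    )
    conn.commit()


def average_durations(conn: sqlite3.Connection):
    """Average duration and run count per (feature, device, backup type)."""
    return conn.execute(
        """SELECT feature, storage_device, backup_type,
                  AVG(duration_seconds), COUNT(*)
           FROM job_results
           GROUP BY feature, storage_device, backup_type
           ORDER BY feature, storage_device, backup_type"""
    ).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    start = datetime(2021, 1, 1, 22, 0)
    for minutes in (60, 62, 64):  # three Full runs of the same test case
        post_job(conn, JobRecord("FS-Disk-Full", "File system", "Disk", "Full",
                                 start, start + timedelta(minutes=minutes)))
    print(average_durations(conn))  # [('File system', 'Disk', 'Full', 3720.0, 3)]
```

Keeping raw per-run rows and aggregating in SQL mirrors the split described above: the automation only records what happened, and the database layer decides how results are summarized for display.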

Performance hardware setup:

Each feature has dedicated servers for performance testing. A dedicated network connects the Backup Exec server to the remote server, and each Backup Exec media server runs on a separate datastore. This arrangement ensures that no other entity interferes with the performance setup hardware, so we get benchmark readings under standard conditions. Backup jobs target a combination of backup devices, such as disk, tape, deduplication devices, and cloud storage.

Measuring criteria and reporting:

We run full and incremental backups and record the time each takes to complete. The full backup job runs three times to calculate its average job time; the incremental backup job then runs five times to calculate its average time. We compare these averages with the benchmark values of the previous release, usually the n-1 release. If performance has dropped, an issue is reported with a severity level that depends on the size of the observed deviation.
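A minimal sketch of these measuring criteria: average the repeated runs, compute the percentage deviation from the n-1 benchmark, and map that deviation to a severity. The thresholds in `SEVERITY_THRESHOLDS` are hypothetical placeholders, since the actual cut-offs are not stated, and all function names are illustrative.

```python
from statistics import mean

# Hypothetical severity thresholds: percent slower than the n-1 benchmark.
# Ordered from most to least severe so the first match wins.
SEVERITY_THRESHOLDS = [
    (25.0, "Critical"),
    (10.0, "High"),
    (5.0, "Medium"),
]


def average_job_time(run_times):
    """Average backup job time over repeated runs (e.g. 3 Full or 5 Incremental runs)."""
    if not run_times:
        raise ValueError("at least one run is required")
    return mean(run_times)


def percent_deviation(current, benchmark):
    """Positive result means the current build is slower than the benchmark."""
    return (current - benchmark) / benchmark * 100.0


def classify(current, benchmark):
    """Return (deviation_percent, severity); severity is None when within tolerance."""
    deviation = percent_deviation(current, benchmark)
    for threshold, severity in SEVERITY_THRESHOLDS:
        if deviation >= threshold:
            return deviation, severity
    return deviation, None


if __name__ == "__main__":
    full_avg = average_job_time([3600, 3660, 3720])           # three Full runs, seconds
    incr_avg = average_job_time([300, 310, 290, 305, 295])    # five Incremental runs
    print(classify(full_avg, benchmark=3300))  # ~10.9% slower -> "High"
    print(classify(incr_avg, benchmark=300))   # (0.0, None): within tolerance
```

For example, Full runs of 3600, 3660, and 3720 seconds average 3660 seconds; against a 3300-second benchmark that is about 10.9% slower, which these placeholder thresholds classify as High. Builds that are faster than the benchmark produce a negative deviation and are never flagged.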
