- Disk group fails after implementing disk group fen...

08-27-2015 09:28 AM

Hi,

I have a lab setup with two virtual machines, both running RHEL 6.5 with the SFHA 6.2 suite installed.

I first installed an Oracle database failover cluster. The binaries are installed locally and the database files are on a separate VMware disk. This disk is managed by the VMware agent.

The resources in the service group are the following:

VMware disk --> disk group --> volume --> mount --> Oracle database SID --> listener

Before I configured fencing with three RDM disks, the cluster was working perfectly.

The volume where the Oracle SID is installed sits on a separate vmdk disk, is managed by SF, and is mounted as vxfs.

The problem is that now, when I try to bring the service group online, the DiskGroup agent fails.

I would welcome any ideas before I post logs.

Thanks

Labels: Agents, business continuity, Cluster Server, Linux

08-28-2015 12:52 AM

Your Oracle diskgroup volume is probably not SCSI-3 compliant.

MonitorReservation:

If the value is 1 and SCSI-3 fencing is used, the agent monitors the SCSI reservation on the disk group. If the reservation is missing, the monitor agent function takes the service group containing the resource offline.

Type and dimension: boolean-scalar

Default: 0

Note: If the MonitorReservation attribute is set to 0, the value of the cluster-wide attribute UseFence is set to SCSI3, and the disk group is imported without SCSI reservation, then the monitor agent function takes the service group containing the resource offline.

Please also see the pdf under 'Attachments' in this TN:

About I/O fencing for VCS in virtual machines that do not support SCSI-3 PR

http://www.symantec.com/docs/TECH130785

08-28-2015 02:34 AM

If the disk group is created on virtual disks rather than physical RDMs and the cluster has UseFence=SCSI3 set, then VCS will try to import the disk group with SCSI-3 reservations and the import will fail.

You need to use disks that are SCSI-3 compliant, which may need to be presented to the VM as physical RDMs.

Otherwise, set the DiskGroup resource attribute Reservation = NONE. This imports the disk group without reservation. However, such a configuration is not recommended, as it exposes the disk group to data corruption.

Reservation

Determines if you want to enable SCSI-3 reservation. This attribute can have one of the following three values:

- ClusterDefault: The disk group is imported with SCSI-3 reservation if the value of the cluster-level UseFence attribute is SCSI3. If the value of the cluster-level UseFence attribute is NONE, the disk group is imported without reservation.

- SCSI3: The disk group is imported with SCSI-3 reservation if the value of the cluster-level UseFence attribute is SCSI3.

- NONE: The disk group is imported without SCSI-3 reservation.

To import a disk group with SCSI-3 reservations, ensure that the disks of the disk group are SCSI-3 persistent reservation (PR) compliant.

Type and dimension: string-scalar

Default: ClusterDefault

Example: "SCSI3"
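To make the interaction between UseFence and Reservation easier to see, here is a small Python sketch of the rules quoted above. It is only a model of the documentation text, not agent code. The function name is made up for illustration, and the combination Reservation=SCSI3 with UseFence other than SCSI3 is returned as None because the quoted text does not cover it.

```python
from typing import Optional


def imports_with_reservation(use_fence: str,
                             reservation: str = "ClusterDefault") -> Optional[bool]:
    """Model of the quoted Reservation attribute description.

    Returns True if the disk group would be imported with SCSI-3
    reservation, False if without, and None where the quoted text
    does not say what happens.
    """
    if reservation == "NONE":
        # Always imported without SCSI-3 reservation.
        return False
    if reservation == "ClusterDefault":
        # Follows the cluster-level UseFence attribute.
        return use_fence == "SCSI3"
    if reservation == "SCSI3":
        # The quoted text only covers the UseFence=SCSI3 case.
        return True if use_fence == "SCSI3" else None
    raise ValueError(f"unknown Reservation value: {reservation!r}")


# The situation in this thread: UseFence=SCSI3 and the default Reservation
# value, so the vmdk-backed disk group is imported with reservation.
print(imports_with_reservation("SCSI3"))
print(imports_with_reservation("SCSI3", "NONE"))
```

With the default ClusterDefault value, turning on SCSI3 fencing cluster-wide changes how every DiskGroup resource is imported, which matches the symptom described in the first post.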

To check whether the disks are SCSI-3 compliant, you can use the vxfentsthdw utility.

https://sort.symantec.com/public/documents/vcs/6.0/linux/productguides/html/vcs_admin/ch10s02s05.htm

Please also provide the DiskGroup agent and engine logs so we can pin down the exact problem.

Regards,

Sudhir

08-28-2015 03:45 AM

Dear all

There are two disk groups:

- Oradg-->for oracle database files. This group is using native vmware vmdk filesystem. This disk does not support SCSI-3 reservation.

- Orafence-->for coordination disks for fencing. Uses RDM. Before configuring the fencing method with ./installvcs62 -fencing option I checked with vxfentsthdw utilitiy that the 3 disks were SCIS-3 compliant.

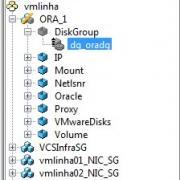

Here some pictures and information about the diskgroups.

[root@vmlinha01 ~]# vxdisk -eo alldgs list

DEVICE TYPE DISK GROUP STATUS OS_NATIVE_NAME ATTR

vmdk0_0 auto:LVM - - online invalid sda -

vmdk0_1 auto:cdsdisk oradg01 oradg online dgdisabled sde -

3pardata0_33 auto:cdsdisk - (fendg) online thinrclm sdb tprclm

3pardata0_34 auto:cdsdisk - (fendg) online thinrclm sdc tprclm

3pardata0_35 auto:cdsdisk - (fendg) online thinrclm sdd tprclm

[root@vmlinha02 ~]# vxdisk -eo alldgs list

DEVICE TYPE DISK GROUP STATUS OS_NATIVE_NAME ATTR

vmdk0_0 auto:LVM - - online invalid sda -

vmdk0_1 auto - - error sde -

3pardata0_33 auto:cdsdisk - (fendg) online thinrclm sdb tprclm

3pardata0_34 auto:cdsdisk - (fendg) online thinrclm sdc tprclm

3pardata0_35 auto:cdsdisk - (fendg) online thinrclm sdd tprclm
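For anyone who wants to spot the unhealthy disks in listings like the two above without reading them by eye, here is a rough Python sketch. It assumes the whitespace-separated column layout shown above (DEVICE, TYPE, DISK, GROUP, a status of one or more words, OS_NATIVE_NAME, ATTR). The function name and the choice to flag only the words "error" and "dgdisabled" are my own assumptions, not anything defined by vxdisk.

```python
def flag_problem_disks(listing: str) -> dict:
    """Map device name -> suspicious status words found in a
    'vxdisk -eo alldgs list' style listing (layout assumed as in this thread)."""
    suspicious = ("error", "dgdisabled")  # assumption: the words treated as a problem
    problems = {}
    for line in listing.splitlines():
        tokens = line.split()
        # Skip blank lines, shell prompts and the header row.
        if len(tokens) < 7 or tokens[0] == "DEVICE":
            continue
        # Status is everything between the GROUP column and the last two columns.
        status = tokens[4:-2]
        bad = [word for word in status if word in suspicious]
        if bad:
            problems[tokens[0]] = bad
    return problems


NODE1 = """\
DEVICE TYPE DISK GROUP STATUS OS_NATIVE_NAME ATTR
vmdk0_0 auto:LVM - - online invalid sda -
vmdk0_1 auto:cdsdisk oradg01 oradg online dgdisabled sde -
3pardata0_33 auto:cdsdisk - (fendg) online thinrclm sdb tprclm
"""

NODE2 = """\
DEVICE TYPE DISK GROUP STATUS OS_NATIVE_NAME ATTR
vmdk0_0 auto:LVM - - online invalid sda -
vmdk0_1 auto - - error sde -
3pardata0_33 auto:cdsdisk - (fendg) online thinrclm sdb tprclm
"""

print(flag_problem_disks(NODE1))
print(flag_problem_disks(NODE2))
```

In both listings only vmdk0_1, the vmdk disk behind oradg, is flagged. The three RDM coordination disks are not.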

Do you mean that implementing this fencing method to avoid split-brain situations affects the service group ORA_1, where the disk group oradg is configured?

If so, is the only way to avoid this situation to remove the SCSI-3 reservation disks of the orafendg group and implement the Coordination Point Server fencing method?

Thanks for your support.

08-28-2015 11:12 PM

You could still use disk-based fencing for cluster arbitration, but then you would have to set the DiskGroup attribute Reservation = NONE for the Oracle disk group resource. This ensures that the disk group is imported without SCSI-3 reservations.

This configuration provides the same benefits as using a CP server for fencing.

Overall, fencing provides cluster arbitration during a split-brain, and in addition it can be used to provide data protection for the disk groups.

Using disks that are not SCSI-3 PGR compliant for data disk groups can expose them to data corruption in some cases. This holds true even when using the CP server based fencing mechanism.
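As a rough illustration of the Reservation = NONE change described above, the Python sketch below only builds and prints the command lines; it does not run anything. The resource name oradg_res is hypothetical, and the sequence (open the configuration, modify the resource, save and close) is the usual way of changing a resource attribute from the command line as far as I know, so please check it against the admin guide for your version before using it.

```python
def reservation_none_commands(resource: str) -> list:
    """Build the command lines that would set Reservation=NONE on a
    DiskGroup resource. Nothing is executed here."""
    return [
        ["haconf", "-makerw"],                                   # open the configuration read-write
        ["hares", "-modify", resource, "Reservation", "NONE"],   # import without SCSI-3 reservation
        ["haconf", "-dump", "-makero"],                          # save and set back to read-only
    ]


# 'oradg_res' is a made-up resource name for illustration.
for cmd in reservation_none_commands("oradg_res"):
    print(" ".join(cmd))
```

Printing the commands first makes it easy to review them, or paste them into a change request, before touching the cluster.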

https://sort.symantec.com/public/documents/sf/5.1/aix/html/vcs_admin/ch_vcs_communications17.html

Regards,

Sudhir

08-30-2015 02:49 AM

Hi,

I really appreciate your help. While looking into this problem I also found this tutorial: https://www-secure.symantec.com/connect/articles/storage-foundation-cluster-file-system-ha-vmware-vmdk-deployment-guide

And now the million-dollar question: which scenario would you use for a PRODUCTION environment?

1. Failover cluster using native vmdk for the Oracle data, with the Reservation=NONE flag set and RDM coordination disks for fencing.

2. Failover cluster using native vmdk for the Oracle data, with a Coordination Point server as the fencing method.

3. Failover cluster using RDM for all disks (data and fencing), as long as they accept SCSI-3 reservations.

Thanks

08-30-2015 10:31 PM

The choice depends on many factors:

- What level of reliability is required?

- How much can it cost?

- Manageability, etc.

If you require a high level of data protection and reliability and you have array devices that support SCSI-3 PGR, then choice 3 would be the best.

If you don't have a reliable network and require sufficient reliability but are not much concerned about data protection, then choice 1 would be good.

If you have a reliable network and need easier manageability of coordination points, then choice 2 would be good.
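The three rules of thumb above can be written down as a tiny Python sketch. This is a simplification and not an official sizing rule: the function and parameter names are made up, cost and manageability are left out, and the case where strong data protection is required but the storage does not support SCSI-3 PGR returns None because none of the three choices covers it.

```python
from typing import Optional


def suggest_option(high_data_protection: bool,
                   scsi3_pgr_storage: bool,
                   reliable_network: bool) -> Optional[int]:
    """Return 1, 2 or 3 for the scenarios listed in the question,
    or None if none of the rules of thumb fits."""
    if high_data_protection:
        # Data protection needs SCSI-3 PGR capable data disks (choice 3).
        return 3 if scsi3_pgr_storage else None
    # Arbitration only: CP server if the network is reliable (choice 2),
    # otherwise coordination disks with Reservation=NONE (choice 1).
    return 2 if reliable_network else 1


print(suggest_option(True, True, False))
print(suggest_option(False, False, True))
print(suggest_option(False, False, False))
```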

I am not sure whether I have confused you again, but we have seen Cluster Server in production with each of these options. It depends on what each customer feels is best for their requirements.

Regards,

Sudhir