Restore Operation

06-28-2021 06:39 AM

Hello,

I am attempting to restore several very large backup jobs and am encountering very slow response times, which fall into two categories:

1. "Assembling file list" - This is a pre-restore operation that takes place before the data is recovered. One job running now covers 4.5 million files; it has taken 70 hours so far to reach 1.5 million files. No other backup jobs are running on this server anymore.

2. Slow data - The data is being recovered from an S3 bucket. I noticed a 30mb limit configured, which I removed, and that improved the speed. However, there are no multiple streams, so restoring thousands of smaller files takes a very long time, as it appears to process them one at a time.
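To put the "Assembling file list" figures in perspective, here is a rough back-of-the-envelope estimate. It assumes the list is assembled at a constant rate, which may not hold in practice; the function name is only illustrative.

```python
def estimate_remaining_hours(total_files, files_done, hours_elapsed):
    """Extrapolate the remaining time, assuming a constant processing rate."""
    rate = files_done / hours_elapsed            # files per hour so far
    remaining = (total_files - files_done) / rate
    return remaining, rate


# Figures from the post: 4.5 million files, 1.5 million done after 70 hours.
remaining, rate = estimate_remaining_hours(4_500_000, 1_500_000, 70)
print(f"{rate:,.0f} files/hour, about {remaining:.0f} hours remaining")
```

At the observed rate, the remaining 3 million files would need roughly twice the time already spent, before any data is actually restored.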

Any advice would be fantastic - many thanks!!

06-28-2021 07:04 AM - edited 06-28-2021 07:14 AM

This is more general restore advice.

Restoring e.g. 1 TB of small files will take much longer than restoring e.g. 1 TB of Exchange data files.

This is due to the overhead of creating files in the operating system.

So what to do:

- Make sure antivirus doesn't scan the restored files.

- Restore in multiple data streams (for disk-based backups). Instead of restoring e.g. 1 TB in one job, do partial restores where the restore is split into e.g. 4-6 streams. I assume this will also help with the "Assembling file list" waiting time.

- Memory - make sure the client has enough memory; a giant file list may consume all available memory and cause paging.

- Accept - you can't beat the laws of physics :)
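The idea of splitting one big restore into several streams can be sketched as follows. This is only an illustration, not a NetBackup feature: the function names, the output file names and the round-robin split are all assumptions, and in practice splitting by top-level directory may be more natural. Each resulting list file would then drive its own partial restore job.

```python
from pathlib import Path


def split_into_streams(paths, streams):
    """Distribute paths round-robin over the given number of streams."""
    if streams < 1:
        raise ValueError("streams must be at least 1")
    buckets = [[] for _ in range(streams)]
    for index, path in enumerate(paths):
        buckets[index % streams].append(path)
    return buckets


def write_list_files(buckets, out_dir):
    """Write one list file per stream (hypothetical file names)."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    written = []
    for number, bucket in enumerate(buckets, start=1):
        list_file = out / f"restore_stream_{number}.txt"
        list_file.write_text("\n".join(bucket) + "\n")
        written.append(list_file)
    return written


# Example: ten made-up file paths split across four streams.
example_paths = [f"/data/export/file{i}.xml" for i in range(10)]
buckets = split_into_streams(example_paths, 4)
print([len(b) for b in buckets])
```

Round-robin keeps the streams roughly equal in file count, which matters more than total size when per-file overhead dominates.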

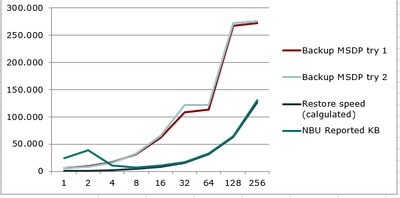

Here is a graph I made some years ago, which shows restore speed against file size. Your mileage may vary, but it does show there is a link between the two.

X axis - file size

Y axis - number of files

Hope this helps

Best Regards

Nicolai

06-28-2021 10:29 PM

Many thanks for taking the time to explain, Nicolai!

I agree a single VMDK file will be faster than 4.5 million 10 KB .xml files :)

There is lots of memory in the client and the NetBackup master server, but usage never exceeds 9 GB of the 100 GB RAM.

The project I am involved in is to restore ALL NetBackup data so it can be ingested into our new backup product. Our method is to restore to a scratch location, where the data is then picked up by the new backup application. The restores are slow, hence my inquiry.

Are there any typical configuration tweaks I could make that would also help?

Many thanks
