The doubt about the OPR status under the vmoprcmd devmon output

06-30-2022 05:48 AM

This may be an old, classic question about tape drive (TD) status, but we sometimes find some TDs inexplicably showing OPR status in the vmoprcmd -devmon output.

So my question is: what does the OPR status actually mean?

# vmoprcmd -devmon ds|grep jcsjdb3

jcsjdb3 /dev/nst6 TLD

jcsjdb3 /dev/nst7 TLD

jcsjdb3 /dev/nst8 TLD

jcsjdb3 /dev/nst10 TLD

jcsjdb3 /dev/nst11 TLD

jcsjdb3 /dev/nst9 ACTIVE

jcsjdb3 /dev/nst0 TLD

jcsjdb3 /dev/nst3 TLD

jcsjdb3 /dev/nst5 TLD

jcsjdb3 /dev/nst4 TLD

jcsjdb3 /dev/nst2 OPR

jcsjdb3 /dev/nst1 TLD

06-30-2022 06:18 AM - edited 06-30-2022 06:26 AM

OPR means that the drive is in "operator" control. You can load and unload tapes by hand.

If the drive is a standalone drive, this is the correct status.

If the drive is part of a library, then there is a problem with the library or with the NetBackup configuration.

You can also bring a drive up in OPR mode deliberately if you want to operate it manually, for whatever reason.
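
As a quick way to spot drives that are in operator control, the status column of the vmoprcmd output can be filtered. The sketch below assumes the three-column layout (host, drive path, status) shown in the excerpt earlier in this thread; the real output on your system may have more columns. The function name `list_opr_drives` is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print host and drive path for every line whose
# last whitespace-separated field is exactly "OPR".
# Assumes input lines such as "jcsjdb3 /dev/nst2 OPR".
list_opr_drives() {
    awk '$NF == "OPR" { print $1, $2 }'
}
```

It would be used as `vmoprcmd -devmon ds | list_opr_drives`. Matching on the last field, rather than grepping for the text OPR anywhere, avoids false hits on host or drive names that happen to contain those letters.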

06-30-2022 09:00 AM

Could you give more details about such a scenario, i.e. a problematic tape library (TL) or a wrong configuration?

06-30-2022 09:15 PM

Such issues are resolved by a complete power cycle of the tape library; failing that, you would end up working with the tape library vendor.

07-01-2022 05:28 AM

1) Does it mean that there is no other way to reset the OPR status from the NBU layer?

2) What is the difference between the OPR and AVR statuses for a drive under robotic control?

07-01-2022 03:13 PM

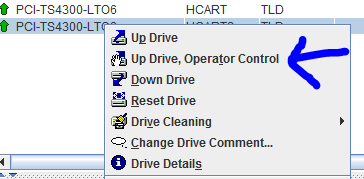

Set the drive down, and then up ... it should come out of OPR mode.

AVR is different - if a drive is standalone / not in a robot it should be in AVR mode.

If a drive is robotic, it should be in TLD or ACS mode, depending on the robot type. If a TLD drive on the robot control host (RCH) goes to AVR mode, it is almost certain that the connection to the library has been lost; any other drives in that robot will also show as AVR, as will any shared drives on 'remote' media servers.

If a shared drive goes to AVR on a media server that is NOT the robot control host, but the same drives are still TLD on the RCH itself, then there is a comms issue between the remote media server and the RCH. Specifically, tldd on the remote media server cannot communicate with tldcd on the RCH.

ACS is a bit different, as there is no robot control host. Instead, each media server communicates with the ACS server, which is third party (Oracle) and takes the place of a robot control host. Here, if drives that were ACS go to AVR, then communication with the ACS server has been lost, and multiple media servers may or may not be affected depending on the cause.

There are other reasons robotic drives can go to AVR mode, but the above is by far the most common.
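
The TLD reasoning above can be condensed into a small decision helper. This is only a sketch of the rules of thumb in this post, not anything NetBackup provides: `diagnose_tld_avr` and its two arguments (the control mode seen for the drive on the robot control host, and the mode seen on a remote media server) are hypothetical, and the ACS case is not covered.

```shell
#!/bin/sh
# Hypothetical helper encoding the TLD/AVR rules of thumb from this thread.
#   $1 = control mode of the drive as seen on the robot control host (RCH)
#   $2 = control mode of the same shared drive as seen on a remote media server
diagnose_tld_avr() {
    rch_mode=$1
    remote_mode=$2
    if [ "$rch_mode" = "AVR" ]; then
        # AVR on the RCH itself: the library connection is the likely culprit.
        echo "RCH has probably lost its connection to the library"
    elif [ "$remote_mode" = "AVR" ]; then
        # TLD on the RCH but AVR remotely: comms between the two hosts.
        echo "remote media server probably cannot reach the RCH"
    else
        echo "no AVR condition seen"
    fi
}
```

For example, `diagnose_tld_avr TLD AVR` reports the remote media server case. As noted above, other causes exist, so treat the result as a first guess only.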

07-01-2022 10:28 PM

It is a bit strange that neither restarting ltid nor directly setting the TD up clears the OPR status.

Or maybe the OPR TD has to be set down first?

07-02-2022 09:49 AM

Hmm, that is a bit odd. You don't see OPR status very often, and what I suggested has worked for me in the past.

It's not a NetBackup config issue as far as I can see, as nothing has changed here. You do get the odd issue where everything looks good but deleting and reconfiguring does resolve it, but I don't think we are at that point.

Can you power cycle the drive? A proper power cycle, not a 'reset' from the library console.

07-03-2022 02:57 AM - edited 07-03-2022 03:25 AM

The key question is why a TD under the same, otherwise healthy, robotic control sometimes inexplicably goes to OPR status.

In other words, what is the trigger condition that makes the ltid process automatically put a TD into OPR status?

07-03-2022 02:45 PM - edited 07-03-2022 02:47 PM

I don’t actually know what could cause a drive to go to OPR aside from it being set manually in the GUI. I really haven’t seen it often.

Can you confirm if it’s always the same drive?

However, let me see what I can find out.

07-03-2022 04:36 PM

Not always the same ...

07-04-2022 07:28 AM

Let's see if we can get something from the logs:

Create the reqlib log dir:

/usr/openv/volmgr/debug/reqlib

Add the word VERBOSE to /usr/openv/volmgr/vm.conf

Stop and restart ltid (/usr/openv/volmgr/bin/stopltid, wait a few moments and then /usr/openv/volmgr/bin/ltid -v)

/usr/openv/volmgr/debug/reqlib/<logfile> should then contain content ...

Set any drives in OPR status down:

/usr/openv/volmgr/bin/vmoprcmd -down <drive instance number>

(The drive instance number is the number to the left of the drive name as seen in tpconfig -d)

Set the drive back up using the command line:

/usr/openv/volmgr/bin/vmoprcmd -up <drive instance number>

Wait for one of the drives to go to OPR status, then collect the reqlib log for that day.

Look in the log for the string: upopr
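
The last step can be sketched as a small search over the reqlib directory. The directory path is the one given above; the function name `find_upopr_lines` and the `REQLIB_DIR` override are only illustrative.

```shell
#!/bin/sh
# Directory created in the steps above; override REQLIB_DIR to search a copy.
REQLIB_DIR="${REQLIB_DIR:-/usr/openv/volmgr/debug/reqlib}"

# Hypothetical helper: print file name, line number and text of every
# log line that contains the string "upopr".
find_upopr_lines() {
    # Passing /dev/null as an extra file makes grep print the file name
    # even when the directory holds only a single log.
    grep -n 'upopr' /dev/null "$REQLIB_DIR"/*
}
```

Running `find_upopr_lines` after a drive has gone to OPR gives the time stamps to line up against the other logs. If nothing matches, grep simply returns a non-zero status and prints nothing.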

07-05-2022 06:16 AM

Here are the complete volmgr debug logs for this OPR issue.

But there seems to be almost no significant information in them ...

18:02:26.313 [32708] <2> vnet_same_host_and_update: [vnet_addrinfo.c:3021] Comparing name1:[jcbak] name2:[jcbak]

18:02:26.313 [32708] <4> get_emm_server_info: EMM server = jcbak; EMM port = 1556

18:02:26.335 [32708] <2> get_master_server_name: Master server name = jcbak

18:02:46.610 [471] <4> vmoprcmd: INITIATING

18:02:46.636 [471] <2> vmoprcmd: argv[0] = vmoprcmd

18:02:46.636 [471] <2> vmoprcmd: argv[1] = -h

18:02:46.636 [471] <2> vmoprcmd: argv[2] = jcsjdb3

18:02:46.636 [471] <2> vmoprcmd: argv[3] = -up

18:02:46.636 [471] <2> vmoprcmd: argv[4] = 9

18:02:46.636 [471] <2> vmdb_start_oprd: received request to start oprd, nosig = yes

18:02:46.636 [471] <2> vnet_same_host_and_update: [vnet_addrinfo.c:3021] Comparing name1:[jcsjdb3] name2:[localhost]

18:02:46.637 [471] <2> vnet_sortaddrs: [vnet_addrinfo.c:4170] sorted addrs: 1 0x1

07-05-2022 03:17 PM

OK, I think the best way forward, now that the logs are in place, is to set up a cron job that writes the vmoprcmd output to a file every 30 mins, e.g. vmoprcmd >/tmp/vm_out_$(date '+%Y-%m-%d_%H:%M').txt

Then, when you notice a drive in OPR, you can tell to within 30 mins when the status changed, and thus pick out the relevant log file, the vmoprcmd output file that shows the change, and the one before it.
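
One practical wrinkle: inside a crontab entry an unescaped % is treated specially by cron, so the date format above is easier to keep in a small wrapper script than on the crontab line itself. The following is a minimal sketch of such a wrapper. The function name, the `--run` switch and the `VMOPRCMD` / `OUT_DIR` overrides are my own illustration; the vmoprcmd path is the usual UNIX install location and may differ on your system.

```shell
#!/bin/sh
# Both settings can be overridden from the environment.
VMOPRCMD="${VMOPRCMD:-/usr/openv/volmgr/bin/vmoprcmd}"
OUT_DIR="${OUT_DIR:-/tmp}"

# Write the current vmoprcmd output to a file named after the date and
# time, and print the name of the file that was written.
snapshot_drive_status() {
    out_file="$OUT_DIR/vm_out_$(date '+%Y-%m-%d_%H:%M').txt"
    "$VMOPRCMD" > "$out_file" 2>&1
    echo "$out_file"
}

# Only take a snapshot when invoked with --run, so the file can also be
# sourced without side effects.
if [ "${1:-}" = "--run" ]; then
    snapshot_drive_status
fi
```

Saved as, say, /usr/local/bin/vm_snapshot.sh (a hypothetical path), it could be scheduled with a crontab line such as `*/30 * * * * /usr/local/bin/vm_snapshot.sh --run >/dev/null`, assuming your cron supports the `*/30` step syntax.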

I ran a quick test today on a VTL: when I set the drive manually to OPR, no SCSI commands were sent that I could see, so it's totally a NetBackup 'thing', not hardware (I think).

07-05-2022 04:50 PM

Surely it must be a purely logical TD status that exists only in the NBU layer!

BTW, is there no significant information in the volmgr debug logs I attached above?

07-06-2022 03:52 PM

Hence, by logging the vmoprcmd output to a file whose name contains the date and time, run say every 30 mins, you can capture the drive changing from TLD to OPR; then with the accompanying log we can see if it shows anything.

07-06-2022 09:17 PM

I think the relevant developers in backline support should know about this mechanism ...

07-07-2022 05:57 AM

18:02:46.812 [9948] <4> oprd: INITIATING

18:02:46.812 [9948] <2> mm_getnodename: cached_hostname jcsjdb3, cached_method 3

18:02:46.818 [9948] <2> mm_getnodename: (3) hostname jcsjdb3 (from mm_master_config.mm_server_name)

18:02:46.818 [9948] <2> oprd: got CONTINUE, connecting to ltid

18:02:47.594 [9948] <2> vnet_pbxConnect_ex: pbxConnectExEx Succeeded

18:02:47.654 [9948] <8> do_pbx_service: [vnet_connect.c:2581] via PBX VNETD CONNECT FROM 10.131.29.105.29439 TO 10.131.33.60.1556 fd = 9

18:02:47.655 [9948] <8> vnet_vnetd_get_security_info: [vnet_vnetd.c:2823] VN_REQUEST_GET_SECURITY_INFO 9 0x9

18:02:47.674 [9948] <8> vnet_vnetd_disconnect: [vnet_vnetd.c:201] VN_REQUEST_DISCONNECT 1 0x1

18:02:47.679 [9948] <2> process_requests: 2 9 -1 *NULL*

18:02:47.680 [9948] <2> process_requests: oprd received string 2 9 -1 *NULL*

18:02:47.680 [9948] <4> fix_serial_number: initiating with drive index 9

18:02:47.680 [9948] <4> fix_serial_number: drive index 9 is UP - cannot be swapped <<< What does this mean?

18:02:48.636 [9948] <2> process_requests: TERMINATE

18:02:48.636 [9948] <2> process_requests: received TERMINATE request

07-07-2022 03:53 PM

18:02:47.680 [9948] <4> fix_serial_number: drive index 9 is UP - cannot be swapped <<< What does this mean?

NBU tracks drives via serial number; to me this just looks like it is confirming the serial number for the drive. It's a level <4> log line, so not of any concern.

What we need is the details I previously requested: vmoprcmd output written to a dated file from cron (every 30 mins is fine). That way we can see (to within 30 mins) when the drive goes to OPR, and then look at the corresponding logs for before, during and after that time.
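
Once the dated vmoprcmd files exist, the two files of interest (the last one before the change and the first one showing OPR) can be picked out mechanically. The sketch below assumes files named vm_out_<date>_<time>.txt as in the cron example earlier in the thread, so that alphabetical order is also chronological, and it assumes the drive status is the last field on a line. `find_opr_transition` is a made-up name.

```shell
#!/bin/sh
# Hypothetical helper: scan the dated vmoprcmd snapshots in directory $1
# in name order and report the first file that shows a drive in OPR,
# together with the snapshot taken just before it.
find_opr_transition() {
    prev=""
    for f in "$1"/vm_out_*.txt; do
        [ -f "$f" ] || continue
        # awk exits 0 only if some line has OPR as its last field.
        if awk '$NF == "OPR" { found = 1 } END { exit !found }' "$f"; then
            echo "before: ${prev:-none}"
            echo "after:  $f"
            return 0
        fi
        prev=$f
    done
    return 1
}
```

Calling `find_opr_transition /tmp` prints the pair of files that bracket the change; their time stamps give the window of log lines worth collecting. The function returns non-zero if no snapshot shows OPR.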
