Which process uses these directories, and how to control their size?

06-09-2022 09:30 AM

Hi people:

I'm hunting down the process responsible for using 52 GB in /usr/openv/logs/nbrntd and 220 GB in /usr/openv/var/global/telemetry:

:/usr/openv/logs# du -sh *

20K bmrd

1K bmrs

1K bmrsetup

4.8M nbars

4.3M nbatd

404K nbaudit

923M nbdisco

250M nbemm

337K nbevtmgr

5K nbftclnt

346K nbim

246M nbjm

45K nbkms

25M nbpem

43M nbpxyhelper

309M nbrb

22M nbrmms

52G nbrntd

80M nbsl

34M nbstserv

2.8G nbsvcmon

30K nbvault

35K nbwebservice

1K ncfnbbrowse


:/usr/openv/var/global/telemetry# du -sh *

1K dataset

1K hostuuid

220G nb_runtime

1.2M scheduling

4.3M systemstats

4.3M tmp

228K upload_cache

Some background data:

Physical host running Solaris 11.3 for x86, with 128 GB RAM and one CPU. It is currently running NBU 8.1; the installation has been in service for 10 years (migrated from a SPARC T2 server with an older version of NBU).

I used vxlogmgr -d -e "09/25/2016 00:00:00 AM" but didn't find any file to delete...

So I'm lost as to which process uses those directories and how to control their growth. The ZFS pools are impacted when they are over 90% used.

Can somebody shed some light on this?

Thanks a lot in advance.



06-09-2022 09:43 AM

Hey

Check the current settings for nbrntd:

vxlogcfg -l -p NB -o 387

If DiagnosticLevel or DebugLevel is set to anything other than 1, set it to 1 with the command below:

vxlogcfg -a -p NB -o 387 -s DiagnosticLevel=1 -s DebugLevel=1

About telemetry: what is inside the 220G nb_runtime directory?
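One way to answer that question is to list the largest entries directly under the directory. The following is a minimal sketch using only du, sort, and head; the function name largest_entries is made up for illustration and is not a NetBackup tool.

```shell
# Print the N largest entries (sizes in KB) directly under a directory,
# largest first. Hypothetical helper, not part of NetBackup.
# Usage: largest_entries DIR [N]
largest_entries() {
    dir=$1
    count=${2:-10}
    # du -sk prints one total per entry in kilobytes; sort descending by size.
    du -sk "$dir"/* 2>/dev/null | sort -rn | head -n "$count"
}

# Example with the path from this thread:
# largest_entries /usr/openv/var/global/telemetry/nb_runtime 20
```

Running it again one level deeper on the biggest entry usually narrows things down quickly.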


06-09-2022 10:31 AM

Hi quebek:

About your question on vxlogcfg -l -p NB -o 387, this is the output:

Configuration settings for originator 387, of product 51,216...

LogDirectory = /usr/openv/logs/nbrntd/

DebugLevel = 1

DiagnosticLevel = 6

DynaReloadInSec = 0

LogToStdout = False

LogToStderr = False

LogToOslog = False

RolloverMode = FileSize | LocalTime

LogRecycle = False

MaxLogFileSizeKB = 51200

RolloverPeriodInSeconds = 43200

RolloverAtLocalTime = 0:00

NumberOfLogFiles = 3

OIDNames = nbrntd

AppMsgLogging = ON

L10nLib = /usr/openv/lib/libvxexticu

L10nResource = nbrntd

L10nResourceDir = /usr/openv/resources

SyslogIdent = VRTS-NB

SyslogOpt = 0

SyslogFacility = LOG_LOCAL5

LogFilePermissions = 664
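In the output above, DiagnosticLevel is 6 while DebugLevel is 1. As a rough sketch, the same check can be scripted by filtering the "Key = Value" lines; this assumes the output format matches what is pasted here, and levels_above is a hypothetical helper name.

```shell
# Read "Key = Value" lines on stdin (the format shown in this thread) and
# print any DebugLevel/DiagnosticLevel setting above the given threshold.
# Hypothetical helper. Usage: vxlogcfg -l -p NB -o 387 | levels_above 1
levels_above() {
    awk -v max="${1:-1}" '
    {
        # Split on the first "=" and trim surrounding blanks.
        n = index($0, "=")
        if (n == 0) next
        key = substr($0, 1, n - 1)
        val = substr($0, n + 1)
        gsub(/^[ \t]+|[ \t]+$/, "", key)
        gsub(/^[ \t]+|[ \t]+$/, "", val)
        if ((key == "DebugLevel" || key == "DiagnosticLevel") && val + 0 > max + 0)
            print key "=" val
    }'
}
```

With the settings listed above as input and a threshold of 1, it prints DiagnosticLevel=6.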


06-09-2022 11:01 AM

Hi

So run this one:

vxlogcfg -a -p NB -o 387 -s DiagnosticLevel=1 -s DebugLevel=1

It will decrease the logging level for nbrntd.

You can also try to limit the log size:

"About limiting the size of unified and legacy logs

To limit the size of the NetBackup logs, specify the log size in the Keep logs up to GB option in the NetBackup Administration Console. When the NetBackup log size grows up to this configuration value, the older logs are deleted. To set the log size in GB, select the check box, which lets you select the value in GB from the drop-down list."

This is from https://www.veritas.com/support/en_US/doc/86063237-127664549-0/v106233759-127664549


06-10-2022 12:55 AM

vxlogmgr -d is the preferred option, but if that isn't working, go to /usr/openv/logs/nbrntd and delete the log files; it will have no system impact on NetBackup. If someone zipped a lot of old log files, vxlogmgr will not delete them.

As @quebek has already mentioned, lower the debug/diagnostic level of nbrntd.

With regard to /usr/openv/var/global/telemetry, the data in this area is information reported back to Veritas, according to the link below. I would assume the data is safe to delete, but try disabling telemetry first to be on the safe side. Alternatively, open a support ticket with Veritas.

https://www.veritas.com/support/en_US/doc/133778034-133778053-0/v133387180-133778053
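If the manual cleanup route is taken, it may help to review what would be removed first. A minimal dry-run sketch with standard find follows; the 30-day cutoff in the example is an arbitrary assumption, and old_files is a hypothetical helper name.

```shell
# List regular files under DIR whose last modification is more than DAYS
# days old. This only prints names; nothing is deleted.
# Hypothetical helper. Usage: old_files DIR DAYS
old_files() {
    find "$1" -type f -mtime +"$2" -print
}

# Example with the path from this thread (30 days is an arbitrary choice):
# old_files /usr/openv/logs/nbrntd 30
#
# After reviewing the list, the same test could drive the deletion:
# find /usr/openv/logs/nbrntd -type f -mtime +30 -exec rm -f {} +
```

Because the match is on file age rather than name, this also catches old logs that were zipped by hand.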


06-15-2022 03:05 PM

Hi people, I apologize for having been silent these days; I was waiting to have another session with the end user.

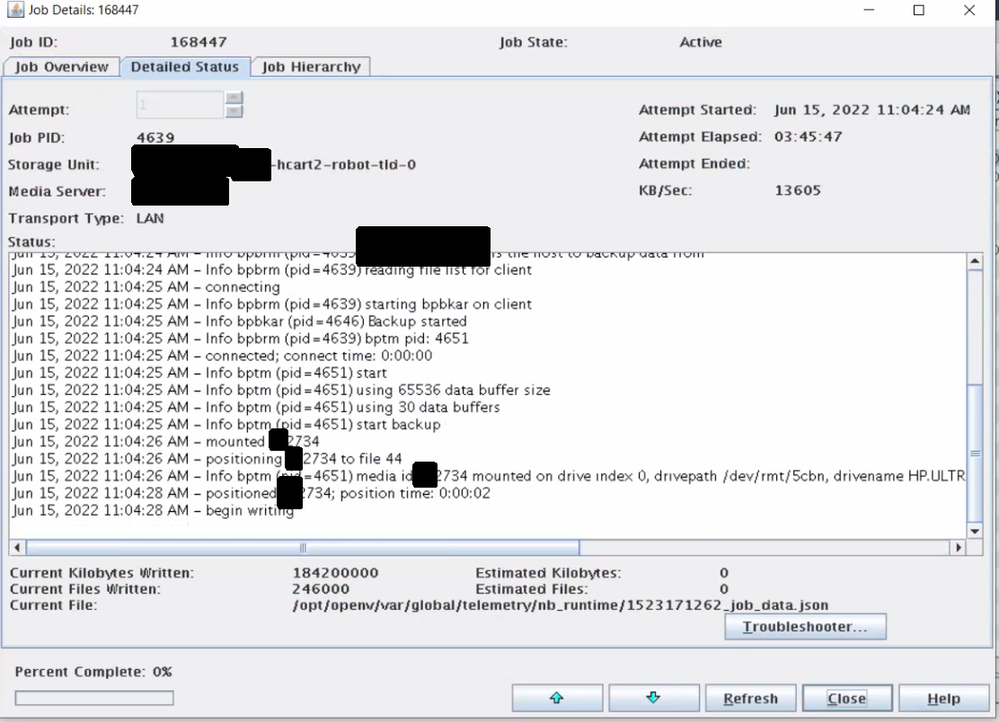

It seems that the files under /usr/openv/var/global/telemetry are somehow part of the catalog backup. They were running their catalog policy, and since it takes 4 hours to complete, I took this screenshot.

I will check the pathnames in that policy, but if it was wizard-generated, could this be an error in the NBU software? (They are using NBU 8.1; they had issues going to 9.0, so they rolled back.)

Tomorrow we have another session, this time to upgrade their Solaris 11.3 to 11.4, but I will surely take the time to look through every place to figure out why the directory is so large and what value those files have.