How NetBackup Parallel Streaming™ Meets the Needs of Today's Modern Workloads

Not long ago, it was common practice for organizations that required BI processing and data analysis to simply extract the data from their primary data stores and analyze it on an as-needed basis. Once the exercise was complete, the results were typically discarded until the next occasion called for deeper BI and analytical insight.

Today, this practice is no longer the norm for most. Big Data analytics and BI practices have seen tremendous growth in just the past few years. In fact, they are now considered standard operating procedure (SOP) for most organizations. This is largely due to the explosion of unstructured data in today’s IoT landscape, as well as the need to store and protect unstructured data using large, scale-out, multi-node environments. In reality, we’re witnessing one of the largest shifts in history in how mission-critical data is produced, stored, analyzed, and leveraged…and it’s happening before our very eyes.

An important question then becomes: how are companies protecting this data now that so much of it is delivered and stored in unstructured formats? Additionally, how can companies scale using traditional client-server architectures given how rapidly this type of data is growing? Take popular Big Data management platforms such as Cloudera's Hadoop distribution, for example. These solutions are designed to manage hundreds of petabytes of information, whether in on-premises environments or in cloud-based services. And when we consider the rapid growth of next-generation, scale-out NoSQL workloads such as HBase, MongoDB, Cassandra, and many other popular open source databases designed specifically to store and serve multiple petabytes of data, a scalable solution is critical.

Unfortunately, many Backup and Recovery (B&R) vendors have chosen to retrofit traditional backup and recovery technologies to service Big Data environments. This means they typically require an agent or client to be installed for client-server communication and data movement. While this may work for smaller environments, the diagram below shows how this model fails to keep up with the growing needs of modern database architectures. As new data nodes are added (in the case of Hadoop below, for example), the Name Node, or Master Node, quickly becomes a bottleneck for the entire solution because all data has to be routed through a single client residing on this node. This model simply does not scale.

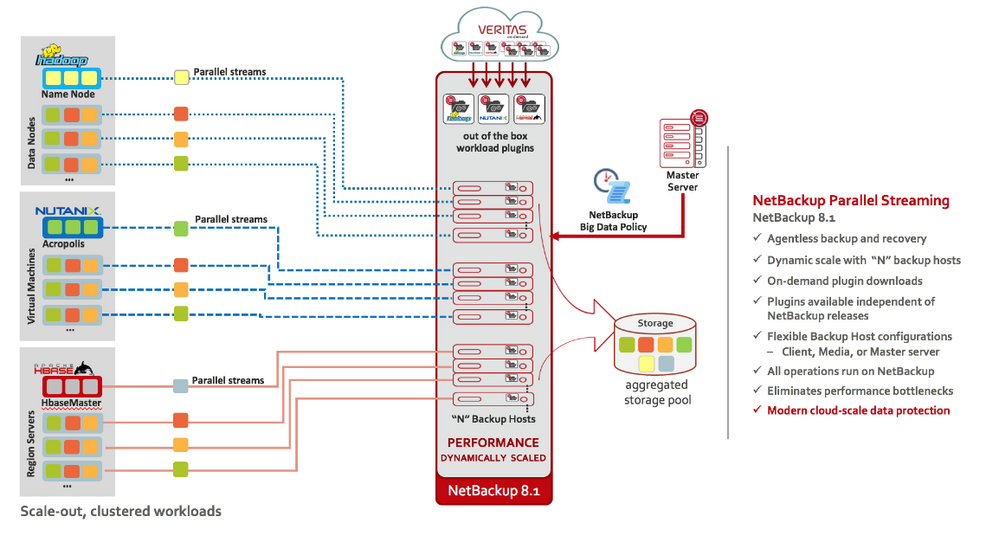
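To make the bottleneck concrete, here is a minimal back-of-the-envelope sketch. The function name, the throughput figure, and the per-node data size are illustrative assumptions, not measurements from any real cluster or product. It models a single client whose throughput stays fixed while the cluster keeps growing.

```python
def backup_hours(data_tb: float, movers: int, tb_per_hour_per_mover: float) -> float:
    """Estimate hours to move data_tb when `movers` hosts stream in parallel.

    Simplified model (an assumption): every mover sustains the same
    throughput and there is no other shared bottleneck.
    """
    if movers < 1:
        raise ValueError("at least one data mover is required")
    return data_tb / (movers * tb_per_hour_per_mover)


# Hypothetical numbers: each data node holds 10 TB, and the single
# client on the Name Node can move 2 TB per hour.
TB_PER_NODE = 10.0
CLIENT_TB_PER_HOUR = 2.0

for data_nodes in (10, 50, 100):
    hours = backup_hours(data_nodes * TB_PER_NODE, 1, CLIENT_TB_PER_HOUR)
    print(f"{data_nodes:>3} data nodes -> {hours:.0f} hours through one client")
```

Under these assumed numbers, the backup window grows in direct proportion to the cluster size, because the number of data movers stays at one.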

Rather than trying to force all backup and recovery data through a single client-server architecture, we designed a new technology called NetBackup Parallel Streaming™ that complements modern, scale-out workload architectures. This B&R technology was modeled on the same principles on which scale-out architectures are built. As the cluster grows and more Data Nodes are added to increase processing power and storage capacity, NetBackup Parallel Streaming allows the user to add Backup Hosts to keep pace. In this manner, B&R scalability mirrors the needs of the cluster environment without ever having to install clients or agents on Name Node or Data Node servers.
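The idea of spreading backup work across several Backup Hosts can be sketched as follows. This is not NetBackup's actual distribution algorithm, which the article does not describe; it is a generic greedy largest-first assignment, and the directory paths, sizes, and host names are hypothetical.

```python
import heapq


def assign_to_backup_hosts(dir_sizes: dict[str, int], hosts: list[str]) -> dict[str, list[str]]:
    """Assign each directory to the currently least-loaded backup host.

    Greedy largest-first balancing: an illustrative stand-in, not the
    product's real scheduling logic.
    """
    if not hosts:
        raise ValueError("at least one backup host is required")
    heap = [(0, host) for host in hosts]  # (assigned size, host name)
    heapq.heapify(heap)
    plan: dict[str, list[str]] = {host: [] for host in hosts}
    # Place the largest directories first so smaller ones fill the gaps.
    for path, size in sorted(dir_sizes.items(), key=lambda kv: kv[1], reverse=True):
        load, host = heapq.heappop(heap)
        plan[host].append(path)
        heapq.heappush(heap, (load + size, host))
    return plan


def max_load(dir_sizes: dict[str, int], plan: dict[str, list[str]]) -> int:
    """Return the largest total size assigned to any single host."""
    return max(sum(dir_sizes[p] for p in paths) for paths in plan.values())


# Hypothetical HDFS directories with sizes in TB.
sizes = {"/data/a": 40, "/data/b": 30, "/data/c": 20, "/data/d": 10}

for n in (1, 2, 4):
    hosts = [f"backup-host-{i}" for i in range(1, n + 1)]
    plan = assign_to_backup_hosts(sizes, hosts)
    print(f"{n} host(s): busiest host moves {max_load(sizes, plan)} TB")
```

With these sample sizes, the busiest host's share drops as hosts are added, which is the scaling behavior the paragraph above describes.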

With agentless technology, cluster upgrades and node additions do not impact the existing B&R solution because there are never any “bits” to manage or maintain on the cluster. Likewise, NetBackup Plug-ins residing on Backup Hosts are not impacted by cluster upgrades. And with agentless technology, a single Backup Host can support multiple platforms (e.g., Linux, Solaris, Windows) and multiple workloads (e.g., Hadoop, HBase, MongoDB, Cassandra) simultaneously, because all of the backup and recovery processing happens remotely using modern interfaces such as REST. This avoids the need to deploy and maintain separate agents or clients for different workloads running on different platforms.

For Hadoop, NetBackup Parallel Streaming consists of the core Parallel Streaming framework, now included in NetBackup 8.1, as well as the Hadoop Plug-in that Backup Hosts (typically NetBackup Media Servers) use to discover, back up, and recover HDFS data. This works the same for customers using Hadoop environments natively and for those using popular analytics platforms such as Cloudera. And because NetBackup Parallel Streaming technology is built directly into NetBackup, customers can take full advantage of the familiar UI for policy-based administration, job scheduling, data movement, monitoring, reporting, cataloging, storage allocation, MSDP pools, and granular recovery of individual files or folders, all through the NetBackup Console.

At Veritas, we believe the best way to protect large scale-out, multi-node cluster environments is by using technologies that were designed specifically for these types of modern database workloads. Rather than retrofitting or “shoehorning” traditional single client-server technologies to service Big Data needs, we designed NetBackup Parallel Streaming™ after the very same design principles on which scale-out architectures are modeled. Visit https://www.veritas.com/product/backup-and-recovery/netbackup/modern-workload-protection to learn more about how Veritas NetBackup provides superior protection of next-generation, modern scale-out workloads.
