
Use Amazon Web Services to Store Your Backups


At Amazon re:Invent last year, the Veritas booth was abuzz with customers interested in our cloud management solutions. We were named the most engaging booth staff at re:Invent, a tremendous honor for the entire team.

The conversations centered on migrating local workloads and data to Amazon Web Services (AWS). I probably had more than a dozen conversations with customers about tape-based backup, and they all shared a common concern: "We use tape because we've done so for many years, but the complexity and cost of storing backups on tape and managing them across multiple sites have become overly burdensome and are failing to meet the demands of my organization."

They were all considering AWS cloud storage to replace their tapes.

Tape hardware needs to be refreshed every seven to ten years. These are long-term and often significant upfront investments. What are you going to do when your tape hardware comes up for a refresh? Can you commit to another ten years?

Ten years is a long time. Moving your backup images to AWS could be an excellent alternative, not only from a cost perspective but also for your organization's agility, leapfrogging your data protection from the "dinosaur era" to what I call today's "instant era." AWS cloud storage has the following advantages over backing up to tape:

- Backing up to AWS is automated, whereas tape is often labor-intensive, and handling physical tape media is prone to human error, which increases the restore failure rate.

- Access to AWS cloud storage is much faster than access to an offline tape. Tape lacks agility: tape retrieval must be planned ahead of time, and there is no transparent on-demand access.

- AWS provides high data durability and built-in DR through replication, within a region at no extra cost or to a remote region for an additional charge, whereas local tape backup requires at least one remote tape copy for DR, which increases costs and tape management complexity.

- AWS is a consumption-based service: you pay for the storage you use, so you no longer need to worry about periodically refreshing backup storage hardware.

- AWS offers low-cost cloud storage options, whereas with tape, management and administration costs often outweigh the apparent savings from inexpensive media.

It is all too easy to continue with tape for another year (or two). Avoid this common pitfall and change the status quo so that your backups can continue to support your organization’s initiatives today and tomorrow.

Here are the three NetBackup questions I was asked most often during the re:Invent conference:

1. How does NetBackup deliver Global Data Visibility?

Moving your backup images to AWS can be challenging because there is no single source of visibility into what data exists in your organization. Think about it: how do you identify the critical data among the junk?

Knowing what data you have is always better than not knowing.

Veritas Information Map and NetBackup help you know your data. As part of the daily backup jobs, NetBackup collects metadata from the information it protects, including which user files were recently created, modified, or deleted, and stores it in the NetBackup master server catalog. Because this catalog is uploaded immediately to the information fabric platform and then visualized in Information Map, the insights are as recent as the associated backup cadence, so your picture of the environment stays up to date.

Information Map uses this metadata for maturity modeling, so you can model and view the age of your data sets by aggregating created-date and last-accessed-date information. With insight into when data was created, you can see the current capacity status throughout your entire environment and adjust rapidly to storage resource or activity demands. You can also use ownership information to ensure that you adhere to privacy regulations as you decide to move to the cloud. The trend, ownership, and age information available through Information Map enables you to:

- React immediately and accurately to areas that require storage capacity expansion or reduction

- Architect and enforce NetBackup storage lifecycle policies (SLPs) that match the data you protect

- Confidently plan your cloud migration projects
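To make the idea of age modeling concrete, here is a minimal Python sketch that aggregates capacity into age bands from per-file created and last-accessed timestamps. This is not how Information Map works internally. The `FileRecord` type, the band boundaries, and the sample paths and sizes are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileRecord:
    # Hypothetical per-file metadata, similar in spirit to what a backup catalog holds
    path: str
    size_bytes: int
    created: datetime
    last_accessed: datetime


# Hypothetical age bands: (exclusive upper bound in days, label)
AGE_BANDS = [(30, "0-29 days"), (365, "30-364 days")]
OLDEST_BAND = "365+ days"


def age_band(age_days: int) -> str:
    """Map an age in days to its band label."""
    for limit, label in AGE_BANDS:
        if age_days < limit:
            return label
    return OLDEST_BAND


def capacity_by_age(records, now, field="last_accessed"):
    """Sum file sizes per age band, measuring age from `field` ("created" or "last_accessed")."""
    totals = Counter()
    for rec in records:
        age_days = (now - getattr(rec, field)).days
        totals[age_band(age_days)] += rec.size_bytes
    return dict(totals)


now = datetime(2024, 1, 1)
files = [
    FileRecord("/proj/app.db", 500, now - timedelta(days=400), now - timedelta(days=2)),
    FileRecord("/proj/old.iso", 4000, now - timedelta(days=900), now - timedelta(days=700)),
    FileRecord("/home/u/notes.txt", 10, now - timedelta(days=40), now - timedelta(days=40)),
]
print(capacity_by_age(files, now))             # capacity by time since last access
print(capacity_by_age(files, now, "created"))  # capacity by time since creation
```

Viewing the same files by both dates separates old-but-active data (the database, created over a year ago but accessed two days ago) from data that is both old and idle (the ISO image). The idle data is the kind you might target first for cheaper storage tiers.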

Gaining visibility over your data is the first step toward more efficient storage use within your data protection strategy and the key to a successful migration to AWS.

Explore Information Map yourself by completing a simple registration form that will give you direct access to our online demo environment.

2. How does NetBackup integrate with AWS cloud?

Everyone I talked to at re:Invent seemed keen to take advantage of AWS cloud storage for backup. Low cost and the elimination of tape management were the two perceived benefits that came up most often. However, whether cloud storage is actually cheaper than tape is a difficult question to answer, and a topic for a future blog post.

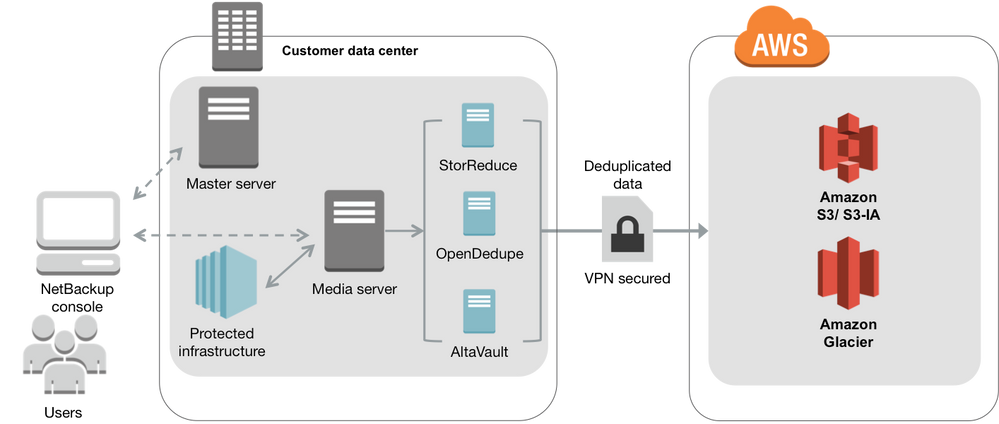

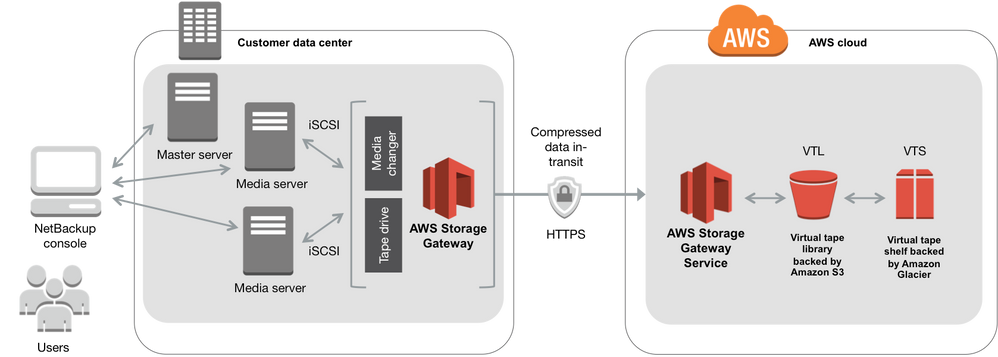

NetBackup can connect to AWS in one of the following ways:

- The NetBackup Cloud Connector

- A Dedupe Gateway Appliance

- The AWS Storage Gateway

Setting up the cloud connector for AWS in NetBackup is easy. The cloud storage configuration wizard guides you through a few simple steps to set up AWS and provision the storage pool. These actions happen entirely in the NetBackup interface, and you don't need any additional NetBackup licenses. Once you have completed the setup, NetBackup treats the AWS backup destination like any other disk storage pool, with its security, compression, and storage lifecycle management functions, which makes it easy to use.

The NetBackup cloud connector offers these benefits:

- Automated storage lifecycle policies to move data to the right storage tiers

- NetBackup Accelerator for faster backups

- Encryption of the data in transit and at rest in AWS

- Data compression to reduce network and storage requirements

- Multi-streaming of data to maximize use of the available bandwidth (up to 90% saturation)

- Bandwidth throttling to limit bandwidth usage for backups, so other network activity isn't constrained

- Data metering, monitoring, and reporting
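Bandwidth throttling of this kind is commonly implemented with a token bucket. The Python sketch below illustrates that general technique; it is not NetBackup's implementation. The `TokenBucket` class, the `send_throttled` helper, and the rates mentioned after the code are hypothetical.

```python
import time


class TokenBucket:
    """Token-bucket rate limiter: allows `burst` bytes at once, then `rate_bps` bytes/second."""

    def __init__(self, rate_bps, burst, clock=time.monotonic):
        self.rate = rate_bps
        self.capacity = burst
        self.tokens = burst          # start with a full bucket
        self.clock = clock
        self.last = clock()

    def _refill(self):
        now = self.clock()
        # Add tokens for the elapsed time, never exceeding the bucket capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def reserve(self, nbytes):
        """Reserve tokens for nbytes; return how many seconds to wait before sending."""
        if nbytes > self.capacity:
            raise ValueError("chunk larger than burst size")
        self._refill()
        self.tokens -= nbytes        # may go negative: the deficit is paid back over time
        return 0.0 if self.tokens >= 0 else -self.tokens / self.rate


def send_throttled(chunks, bucket, send, sleep=time.sleep):
    """Send each chunk, sleeping as needed so the average rate stays under the limit."""
    for chunk in chunks:
        wait = bucket.reserve(len(chunk))
        if wait > 0:
            sleep(wait)
        send(chunk)
```

Consider a limit of 1,000,000 bytes per second with a burst of 1,000,000 bytes. Sending four 500,000-byte chunks then takes about one second. The first two chunks go out immediately from the burst allowance, and the last two each wait 0.5 seconds. Other traffic on the link keeps the remaining bandwidth.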

Figure 4 below shows a solution architecture in which the AWS Tape Gateway configuration replaces backup tapes and tape automation equipment with local disk and AWS cloud storage. The NetBackup media server writes the backup images to virtual tapes stored on the Tape Gateway. Virtual tapes can be migrated into Amazon S3 and eventually archived into Amazon Glacier for the lowest cost. You access the backup images through NetBackup, and the backup catalog maintains complete visibility for all your backups and tapes.

The number of mission-critical workloads in the public cloud is said to double over the next two years, which presents new challenges, particularly for data protection.

In cloud projects, I often see backup given low priority. It is very easy to push backup to the bottom of the priority list, especially when you are urged to deliver a cloud workload fast and need to battle for each IT dollar. Initially, this practice may not cause an immediate problem, but as your cloud workloads become mission-critical, backup becomes equally critical. In my experience, it is not easy to "bolt on" backup after a workload has been running for some time, because workloads invariably grow larger and more complex. Therefore, I believe your day-one goal must be for your AWS workloads to receive the same enterprise-level data protection as your local servers and data, and to be able to recover just as quickly.

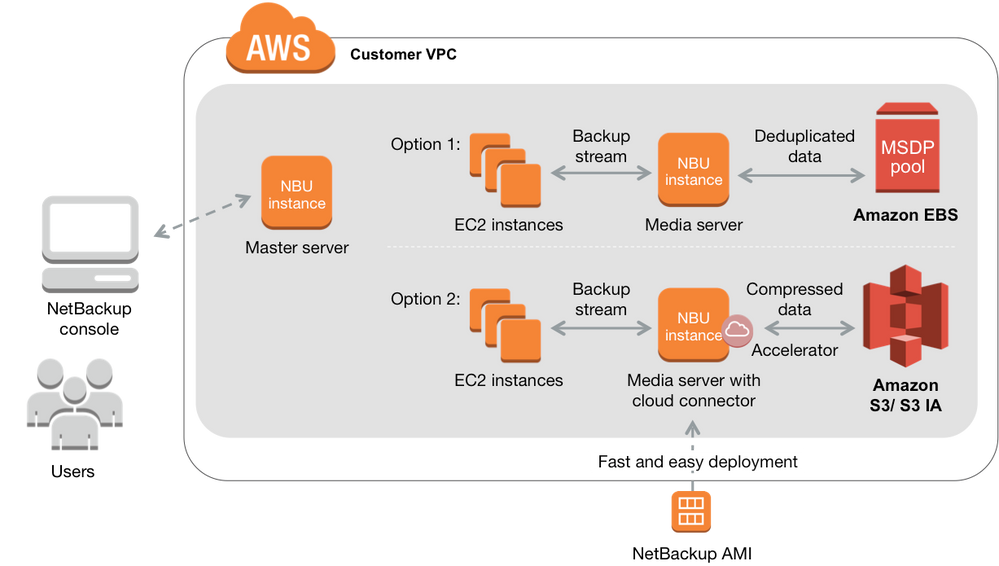

The good news is that NetBackup extends seamlessly to AWS without requiring additional tools or processes. In NetBackup 7.7.3, we made launching a NetBackup virtual server in Amazon Elastic Compute Cloud (EC2) easier and faster with the new NetBackup Amazon Machine Image (AMI). An AMI provides all the information required to launch an instance, which in our case is a virtual NetBackup master or media server in EC2.

Figure 5 shows how you can deploy NetBackup in AWS. You can pick from two options or use a combination of both:

- Option 1 uses Amazon Elastic Block Store (EBS) to create a NetBackup Media Server Deduplication Pool (MSDP) to protect your EC2 workloads. Deduplicated backup images are stored in EBS for performance.

- Option 2 uses the cloud connector with NetBackup Accelerator to move your backup images to cheaper S3 cloud storage. Compressed backup images are stored in S3.
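Option 1 stores deduplicated images and Option 2 stores compressed ones. The toy Python comparison below shows how the two techniques differ on two nearly identical nightly full backups. For simplicity it uses fixed-size chunks and SHA-256 fingerprints. MSDP's actual chunking, fingerprinting, and on-disk format differ, and all names and sizes here are hypothetical. `random.Random.randbytes` requires Python 3.9 or later.

```python
import hashlib
import random
import zlib

CHUNK_SIZE = 4096  # hypothetical fixed chunk size


def dedupe_store(streams, store):
    """Split each backup stream into chunks, keep only chunks not already in `store`,
    and return one recipe (ordered list of chunk fingerprints) per stream."""
    recipes = []
    for data in streams:
        recipe = []
        for i in range(0, len(data), CHUNK_SIZE):
            chunk = data[i:i + CHUNK_SIZE]
            fingerprint = hashlib.sha256(chunk).hexdigest()
            store.setdefault(fingerprint, chunk)  # store each unique chunk once
            recipe.append(fingerprint)
        recipes.append(recipe)
    return recipes


def restore(recipe, store):
    """Rebuild a backup image from its recipe."""
    return b"".join(store[fp] for fp in recipe)


# Two nightly "full backups" of 64 KiB of random (incompressible) data;
# night 2 differs from night 1 in one 4 KiB chunk.
night1 = random.Random(0).randbytes(16 * CHUNK_SIZE)
night2 = night1[:CHUNK_SIZE] + bytes(CHUNK_SIZE) + night1[2 * CHUNK_SIZE:]

store = {}
recipes = dedupe_store([night1, night2], store)
logical = len(night1) + len(night2)
dedup_bytes = sum(len(c) for c in store.values())
compressed_bytes = sum(len(zlib.compress(d)) for d in (night1, night2))

assert restore(recipes[1], store) == night2
print(f"logical={logical} deduplicated={dedup_bytes} compressed={compressed_bytes}")
```

Because this data is incompressible, compression saves almost nothing. Deduplication stores the second night's backup as one new chunk plus references to chunks already kept. Real backup data is usually partly compressible and partly repetitive, so the savings from each technique vary with the workload.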

The key advantage of this approach is that a single console allows you to manage and govern your backups across all your AWS-based workloads and your local servers with the same backup policies and workflows, which means that you'll have complete visibility and control over your data regardless of the location.

But you can go further.

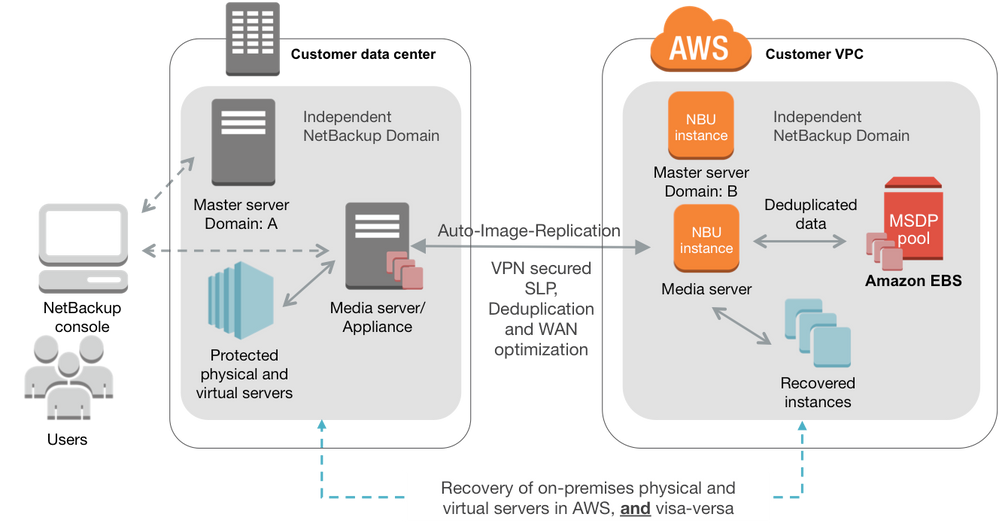

Figure 6 shows how you can leverage the same EC2 infrastructure for your DR strategy.

In this architecture, Amazon EC2 is used as the DR destination for your local physical and virtual servers. You need to set up a separate NetBackup domain in AWS with MSDP and use NetBackup Auto Image Replication (AIR) to copy your backup images to AWS. The advantage of this approach is that you can recover your local servers in EC2 without an expensive dedicated DR build-out. You can also use this approach to recover your EC2 instances to your local data center or to another cloud service provider.

Do you want to test-drive NetBackup in AWS yourself? Launch NetBackup MSDP to protect EC2 workloads? Or explore the Cloud Connector and move backup images to S3?

Right now?

Don’t be a dinosaur!
