Docker Persistent Storage: From Laptop to the Cloud

Containers are a hot topic: every vendor wants to be present, and a good number of incumbents are trying to make a name for themselves in this trend. With higher consolidation rates than Virtual Machines and increased agility, containers will not only enable cost-saving strategies but will also help companies bring products to market faster.

Our folks at Veritas Labs are using containers for a good number of projects, and that is helping us understand internally what is needed to run containers with persistent storage. One of the first things we noticed is that developers commonly use their laptops before moving their applications either on-premises or to the cloud. This matters when they are developing containers that need persistent storage, because the storage systems on the laptop, on-premises, and in the cloud will be quite different. On the laptop they will be using local storage; in the data center they may have hyper-converged infrastructure, storage arrays, or even All-Flash Arrays for performance-sensitive applications; and all of these will differ from what they get in the cloud.

The request we got is that storage services should be transparent to the user, no matter where they run. That means there should be no difference between running the applications on their own laptop and running them later on a cloud service. Agility, control and portability are key factors for container adoption, and storage services should follow that model.

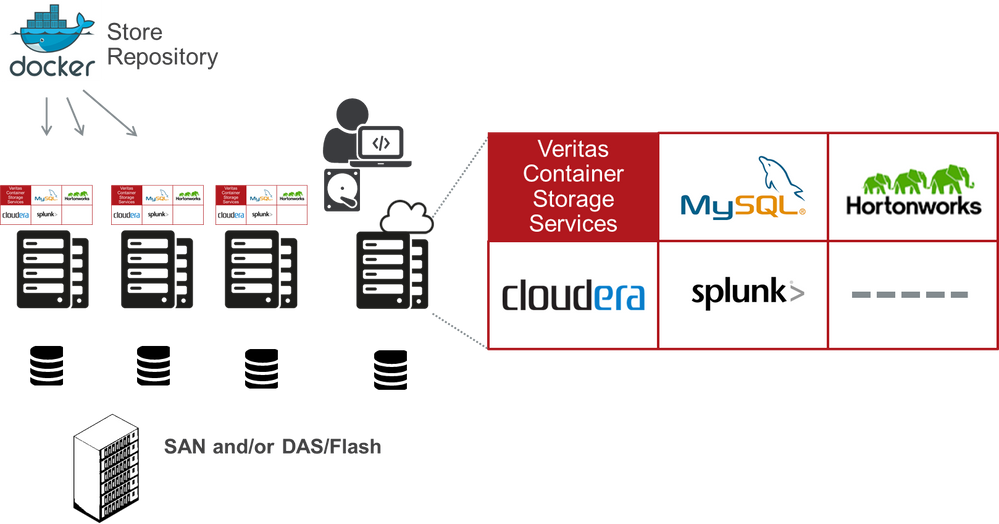

Also, DevOps folks only want to manage containers, which means that even the storage services should be containerized. The image that provides the storage services should be downloadable and runnable on any Virtual Machine (even one running on a laptop), on bare metal, or in the cloud.

Aligned with the new capabilities of Docker Swarm, where a cluster is easily created, the storage services for Veritas containers will simply be downloaded and run. From that point, all the storage services will be initialized and resiliency will be provided automatically across the containers running them. Once the container with the storage services is running, other containers can use those services for persistent storage, with all the capabilities of an enterprise storage solution, such as quality of service and snapshots.

While the look and feel will be the same, each storage service container will make the best use of the storage attached to the node it runs on. For example, a user may have one setup running containers on-premises with All-Flash Arrays for Big Data workloads, and another environment running on Virtual Machines that use only local storage.

The benefits for customers will be increased agility, since persistent storage services can be used in a single easy step, and reduced costs, since software makes it possible to use any hardware technology, whether SAN, DAS, or even Flash.

DevOps teams will no longer have to worry about storage, no matter what environment they are using. These are storage services aligned with the flexibility and agility that Docker provides.

If you want to know more about our storage services capabilities for containers, please reach out to us by joining our Containers Group.

Also, I am looking forward to seeing you at our VISION 2016 Conference, where I will be talking about “Confidently transition to containers: Enable persistent storage for enterprise applications”. You will also have the opportunity to get hands-on experience in the lab “Data centers in a box via containerized applications (Hands on Lab)”. Looking forward to seeing you in Las Vegas!

Carlos.-
