Storage maximum file size ignored when BE backup storage is a NFS share

11-16-2022 03:52 AM - edited 11-23-2022 03:05 AM

Hello everyone.

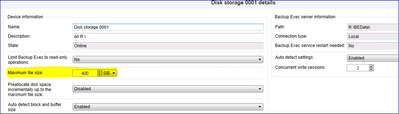

While trying to understand why my backup jobs are "Loading media" every 3 seconds, I took a look at the jobs' activity and at the QNAP storage where the backups are saved. To my great surprise, whatever number I put into the "Maximum file size" field of the storage properties is completely ignored, and every backup creates THOUSANDS of .bkf files of 2.085.888 KB each.

Now, I know perfectly well that the smaller the .bkf files, the lower the chance of data corruption. Supposing I don't care about that and want the .bkf files to be 100GB each instead of 2GB, is there a way to achieve this?

Thanks!

11-16-2022 04:18 AM

Test it on local storage with an increased file size and see if it works.

If the same setting does not work on the QNAP, then it could be some setting on the QNAP. Are you able to create a share like the one you made for BE and then store larger files in that network share?

11-16-2022 04:23 AM

Or mount the volume via iSCSI on the BE server, assign a drive letter, and create local storage with an increased file size, then see if that works.

11-16-2022 04:49 AM

An additional note: I have just tested this using BE 22 by setting the Maximum file size in the properties of the storage to 400GB (this appears to be the maximum limit), clicking Apply to save the change, and then running a backup, which successfully created a 122GB .bkf file. I would expect the same behaviour in any version of BE, to be honest. Consequently, I suspect the QNAP is having an effect, so I would advise following Gurvinder's steps above.

11-16-2022 05:08 AM

I can give it a try tomorrow and let you know, but in the IMG1234567890 backup folders on the QNAP I see 50+ GB files. If something on the QNAP were preventing files larger than 2GB from being created, shouldn't it apply to everything?

11-16-2022 11:25 PM

It does not look like something on the BE side, which is why I suggest testing on a local drive.

If you have a backup folder on the QNAP where larger files (i.e. .bkf) show up, then maybe try creating a new share, test on that, and see how it goes.

11-17-2022 12:42 AM - edited 11-17-2022 04:13 AM

OK. It seems there's something "strange" with the QNAP. I tried to back up a 15GB PST residing on our NetApp to the local disk of the server where BE is installed; it took 12 minutes and created a 15GB .bkf file.

I then ran the exact same job against the QNAP and it's still running after 24 minutes. It's also actually using 5 media of 2GB each instead of continuing to fill just one.

Edit: it took 90 minutes to complete XD

Now the next question is: why is the QNAP doing this? It's nothing more and nothing less than an NFS folder shared from the QNAP storage. I don't recall configuring anything strange, apart from giving only the IP of the BE server the right to access the share.

Also, when I back up virtual machines with Backup Exec, there are no issues, or so it seems: if I go into the IMG123456 folders I can see files of 70-80+ GB.

Edit 2: I just created another NFS test share on the same QNAP, added it as a storage location in BE and ran the same backup. It took 12 minutes, like the previous test to the local folder on the BE server (even though 3 other backups are running against the "real" storage share at the moment), but the PST is still being split into 8 little 2GB files.

This is really weird and it's giving me a lot of headaches, because every backup that doesn't involve "raw" virtual machine backups via the perpetual incremental + consolidation jobs takes forever, with the jobs often stuck for minutes, always on "loading media".
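As a rough sanity check on the numbers above, here is a minimal Python sketch of how many .bkf files a backup needs when every file is capped at a given size. It assumes the 15GB PST is 15 GiB and ignores any Backup Exec overhead or compression; `bkf_file_count` is a hypothetical helper name, not anything from BE.

```python
KIB = 1024
GIB = KIB ** 3


def bkf_file_count(backup_bytes: int, max_file_bytes: int) -> int:
    """Files needed when each file holds at most max_file_bytes (ceiling division)."""
    return -(-backup_bytes // max_file_bytes)


# Size of each .bkf as reported in the first post: 2.085.888 KB.
observed_cap = 2_085_888 * KIB

# Assumption: the "15GB" PST is exactly 15 GiB of backup data.
pst_size = 15 * GIB

print(bkf_file_count(pst_size, observed_cap))  # files at the observed ~2GB cap
print(bkf_file_count(pst_size, 100 * GIB))     # files at a 100GB cap
```

With these assumptions the first call gives 8, which matches the 8 files seen in the test share, and the second gives 1.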

11-21-2022 02:43 AM - edited 11-21-2022 05:22 AM

Since nothing has changed and I have no ideas (I also opened a ticket with QNAP, let's see if they have some instead), I will try to use Windows Server Backup against the QNAP to see if it can create files larger than 2GB.

I will let you know the outcome.

For the record, ALL the other entities in my company that back up to a QNAP via Backup Exec have this exact same issue. I still can't understand whether it's a BE issue, a QNAP issue or a "bad combo for whatever reason" issue.

Edit: more and more confused. Just to test, I installed the Windows Server Backup feature on another server and backed up an 11GB PST to the QNAP folder: a single 11GB file was created in the QNAP folder as expected. I also copied the same PST from the Windows server to the QNAP folder with a plain Windows copy-paste, and the 11GB file was copied without problems. So I HAVE to assume that the QNAP share DOES accept files larger than 2GB, and that whatever is causing BE file and folder backups to the QNAP to be split into little 2GB files is SURELY related to BE, at least to an extent, since every other operation in that folder involving files larger than 2GB works.
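For anyone who wants to repeat the "can the share hold a file larger than 2GB" check without Windows Server Backup, a minimal Python sketch follows. It just writes zeros in chunks and reports the resulting size; `write_large_file` is a hypothetical helper, and the target path is whatever the share is mounted or mapped as on your machine. It says nothing about how Backup Exec itself writes to the share.

```python
import os


def write_large_file(path: str, size_bytes: int,
                     chunk_bytes: int = 4 * 1024 * 1024) -> int:
    """Write size_bytes of zeros to path in chunks and return the final file size."""
    chunk = bytes(chunk_bytes)  # reusable buffer of zero bytes
    remaining = size_bytes
    with open(path, "wb") as f:
        while remaining > 0:
            n = min(remaining, chunk_bytes)
            f.write(chunk[:n])
            remaining -= n
        f.flush()
        os.fsync(f.fileno())  # ask the OS to push the data out before measuring
    return os.path.getsize(path)
```

Calling it with a path on the share and a size such as `3 * 1024**3` (3 GiB) should return that same number if the share accepts large files; an error or a smaller size would point at the share or the client mount rather than at BE.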

Any suggestions?

11-23-2022 12:26 AM - edited 11-23-2022 03:03 AM

I am starting to think it’s something related to how BE behaves when network shares are backed up to non-Windows disk storage that is not directly attached to the BE server (the QNAP is simply attached to our network). Given the same shared folder on the QNAP:

- Copy-pasting large files works.

- Backing up virtual machines with BE works.

- Backing up the same files via Windows Server Backup works.

It’s quite obvious to me that “something” is telling BE not to create files larger than 2GB when the jobs include individual folders and files (whether they are on Windows or on our NetApp makes no difference) on the QNAP share. Since every other type of backup works and properly creates large files, it definitely cannot be a QNAP-only issue. It has to come from within BE itself as well.

Again, any suggestions?

Edit: in the meantime I opened a ticket with QNAP and they pointed me to an interesting link: Linux NFS faq (sourceforge.net).

Taken from the first chapter:

A1. What are the primary differences between NFS Versions 2 and 3?

A. From the system point of view, the primary differences are these:

Version 2 clients can access only the lowest 2GB of a file (signed 32 bit offset). Version 3 clients support larger files (up to 64 bit offsets). Maximum file size depends on the NFS server's local file systems.

NFS Version 2 limits the maximum size of an on-the-wire NFS read or write operation to 8KB (8192 bytes). NFS Version 3 over UDP theoretically supports up to 56KB (the maximum size of a UDP datagram is 64KB, so with room for the NFS, RPC, and UDP headers, the largest on-the-wire NFS read or write size for NFS over UDP is around 60KB). For NFS Version 3 over TCP, the limit depends on the implementation. Most implementations don't support more than 32KB.

NFS Version 3 introduces the concept of Weak Cache Consistency. Weak Cache Consistency helps NFS Version 3 clients more quickly detect changes to files that are modified by other clients. This is done by returning extra attribute information in a server's reply to a read or write operation. A client can use this information to decide whether its data and attribute caches are stale.

Version 2 clients interpret a file's mode bits themselves to determine whether a user has access to a file. Version 3 clients can use a new operation (called ACCESS) to ask the server to decide access rights. This allows a client that doesn't support Access Control Lists to interact correctly with a server that does.

NFS Version 2 requires that a server must save all the data in a write operation to disk before it replies to a client that the write operation has completed. This can be expensive because it breaks write requests into small chunks (8KB or less) that must each be written to disk before the next chunk can be written. Disks work best when they can write large amounts of data all at once.

NFS Version 3 introduces the concept of "safe asynchronous writes." A Version 3 client can specify that the server is allowed to reply before it has saved the requested data to disk, permitting the server to gather small NFS write operations into a single efficient disk write operation. A Version 3 client can also specify that the data must be written to disk before the server replies, just like a Version 2 write. The client specifies the type of write by setting the stable_how field in the arguments of each write operation to UNSTABLE to request a safe asynchronous write, and FILE_SYNC for an NFS Version 2 style write.

Servers indicate whether the requested data is permanently stored by setting a corresponding field in the response to each NFS write operation. A server can respond to an UNSTABLE write request with an UNSTABLE reply or a FILE_SYNC reply, depending on whether or not the requested data resides on permanent storage yet. An NFS protocol-compliant server must respond to a FILE_SYNC request only with a FILE_SYNC reply.

Clients ensure that data that was written using a safe asynchronous write has been written onto permanent storage using a new operation available in Version 3 called a COMMIT. Servers do not send a response to a COMMIT operation until all data specified in the request has been written to permanent storage. NFS Version 3 clients must protect buffered data that has been written using a safe asynchronous write but not yet committed. If a server reboots before a client has sent an appropriate COMMIT, the server can reply to the eventual COMMIT request in a way that forces the client to resend the original write operation. Version 3 clients use COMMIT operations when flushing safe asynchronous writes to the server during a close(2) or fsync(2) system call, or when encountering memory pressure.

For more information on the NFS Version 3 protocol, read RFC 1813.

Now, I don't remember if I already said it, but the storage destination folder is an NFS share. Do you know by chance which NFS version Backup Exec uses to talk to NFS shares (WITHOUT using the BE client for Linux, of course)? Because if it's version 2, maybe it's possible to force version 3 and see if something changes.
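To see how the .bkf size compares with the 2GB limit quoted from the FAQ, here is a small Python sketch of the arithmetic. It takes the limit as 2^31 bytes (signed 32-bit offset) and the file size as the 2.085.888 KB reported in the first post; `headroom_below_limit` is a hypothetical helper name. A match here is only consistent with a 2GB ceiling; it does not prove which NFS version BE is using.

```python
KIB = 1024
MIB = KIB * KIB

# Signed 32-bit offset: an NFS Version 2 client can address bytes below 2^31.
NFS_V2_LIMIT = 2 ** 31


def headroom_below_limit(size_kb: int, limit_bytes: int = NFS_V2_LIMIT) -> int:
    """Bytes between a file of size_kb kilobytes and the limit (negative if above it)."""
    return limit_bytes - size_kb * KIB


observed_kb = 2_085_888  # .bkf size from the first post

print(observed_kb * KIB // MIB)                  # file size in MiB
print(headroom_below_limit(observed_kb) // MIB)  # MiB left before the 2 GiB limit
```

This prints 2037 and 11: each .bkf is 2037 MiB, which is 11 MiB short of 2048 MiB (2 GiB).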

01-11-2023 12:35 AM

A similar case was reported to BE support recently and we created a binary for it. Can you message me the Veritas case number if you logged one, so we can reach out to you?
