High availability: Optimal-provisioning, not over-provisioning

Once upon a time (I’ve always wanted to start an article with that!) I worked for a company that developed and sold a fault-tolerant computer system. Interestingly, Veritas Technologies—then Tolerant Systems—was one of our competitors.[1] The particular architecture at hand was based on highly redundant hardware, with a minimum of four identical copies of each component (CPUs, memory, I/O controllers etc.) in the system—all doing the same thing at the same time. The idea came from the U.S. space program: Apollo mission computers employed an architecture where three CPUs were running in lockstep. The results after every computation were “voted” on, and the majority won.

The idea was 100% uptime. Today, we call that a Recovery Time Objective (RTO) of zero.
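The voting scheme described above, where redundant units run the same computation and the majority result wins, can be sketched in a few lines. This is only an illustration of the idea, not the design of any particular system; the function name `majority_vote` and the sample values are assumptions made for the example.

```python
from collections import Counter


def majority_vote(results):
    """Return the value reported by a strict majority of replicas.

    Hypothetical helper for illustration: `results` holds one output per
    redundant unit that ran the same computation.
    """
    if not results:
        raise ValueError("no replica results to vote on")
    value, count = Counter(results).most_common(1)[0]
    # Require a strict majority; a tie or a three-way split is a failure.
    if count * 2 <= len(results):
        raise RuntimeError("no majority among replicas")
    return value


# Three replicas compute the same result; one of them is faulty.
print(majority_vote([42, 42, 41]))  # prints 42
```

With three replicas, a single faulty unit is outvoted and the computation continues, which is how this style of redundancy avoids any recovery time at all.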

Understanding the unnecessary

The business model of this particular company was to find customers with commercial applications that required 100% uptime and were willing to pay for it (the U.S. air traffic control system, for example). Needless to say, there weren’t many of those customers. One main reason: customers were paying for maximum protection of all their applications, even those that didn’t require an RTO of zero.

Today, we call that over-provisioning—and over-provisioning costs money.

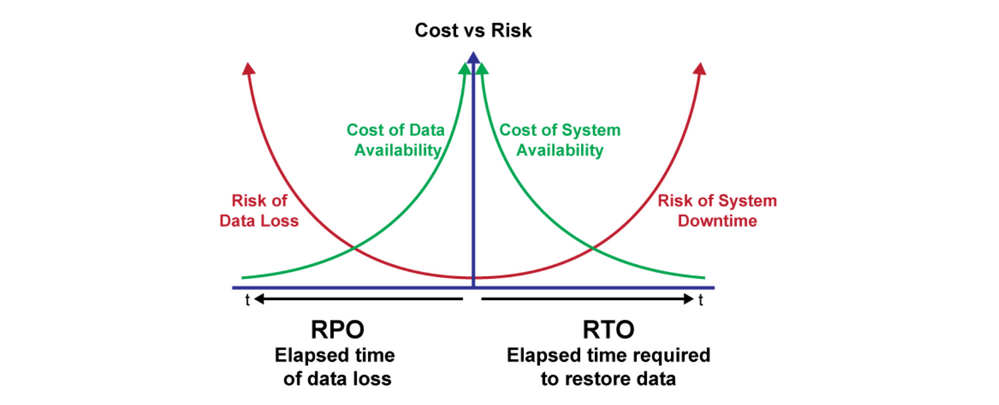

Of course, this is an extreme example of the highest (that is, most expensive) point on the classic “RPO/RTO Cost/Risk” curve (see below). Naturally, we’d like to have an RTO of zero for all our applications, but as we mentioned, it’s just not practical.

The workaround is to tailor availability protection to the importance of the application. Traditional IT models cater to resiliency and performance at the infrastructure layer (networking, storage, compute). But these components aren’t application-aware, meaning there’s no intelligence in the infrastructure to ensure applications are running in a state that meets the service levels required to run the business. If the availability components aren’t application-aware, the only alternative is to apply the highest level of protection needed for the most important application across all applications. Again, that’s over-provisioning.
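To make the cost argument concrete, here is a rough sketch that compares applying the top protection tier to every application with matching each application to the tier it actually needs. The tier names, the cost figures (arbitrary units), and the application inventory are all hypothetical, chosen only to illustrate the arithmetic.

```python
# Hypothetical annual cost per application for each protection tier
# (arbitrary units; not real pricing).
TIER_COST = {"zero_rto": 100, "minutes_rto": 30, "hours_rto": 10}

# Hypothetical inventory: application -> tier the business actually requires.
APPS = {
    "payments": "zero_rto",
    "order_db": "minutes_rto",
    "intranet_wiki": "hours_rto",
    "reporting": "hours_rto",
}


def uniform_cost(apps, tier_cost):
    """Cost when every app gets the most expensive tier that any app requires."""
    top = max(tier_cost[tier] for tier in apps.values())
    return top * len(apps)


def tailored_cost(apps, tier_cost):
    """Cost when each app gets only the tier it requires."""
    return sum(tier_cost[tier] for tier in apps.values())


over = uniform_cost(APPS, TIER_COST)
optimal = tailored_cost(APPS, TIER_COST)
print(over, optimal, over - optimal)  # prints 400 150 250
```

In this made-up inventory only one of four applications needs an RTO of zero, so protecting all four at that level costs well over twice as much as tailoring protection per application.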

The difference in Veritas InfoScale

Veritas InfoScale is a software-defined infrastructure that is application-aware and provides high availability and disaster recovery for critical business services—including databases, customer applications and multi-tiered services. It delivers a common availability platform across physical, virtual and all major public cloud platforms, providing the flexibility to optimize availability according to cost and control requirements.

Awareness and action with InfoScale

Importantly, the InfoScale recovery process can apply to multi-tiered services where a failure at any tier can bring down the entire business service. InfoScale is aware of the complete business service and takes action in the event of a failure to restore the entire service. When an individual component fails, InfoScale automatically orchestrates the connection to other computing resources, on-site or across sites, providing faster recovery and minimal downtime—with no manual intervention.
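As a conceptual illustration of why awareness of the whole service matters, the sketch below models a three-tier service as a dependency graph and works out which tiers need to be restarted, and in what order, when one tier fails. It is not InfoScale's API or algorithm; the `SERVICE` map and the `recovery_plan` function are hypothetical, and the sketch assumes Python 3.9 or later for the standard-library `graphlib` module.

```python
from graphlib import TopologicalSorter

# Hypothetical business service: each tier maps to the tiers it depends on.
SERVICE = {
    "web": {"app"},
    "app": {"database"},
    "database": set(),
}


def recovery_plan(dependencies, failed):
    """List the tiers to restart, dependencies first.

    The plan contains the failed tier plus every tier that depends on it,
    directly or indirectly.
    """
    affected = {failed}
    changed = True
    while changed:
        changed = False
        for tier, deps in dependencies.items():
            if tier not in affected and deps & affected:
                affected.add(tier)
                changed = True
    # static_order() yields each tier only after the tiers it depends on.
    order = TopologicalSorter(dependencies).static_order()
    return [tier for tier in order if tier in affected]


print(recovery_plan(SERVICE, "database"))  # prints ['database', 'app', 'web']
print(recovery_plan(SERVICE, "app"))       # prints ['app', 'web']
```

A tool that sees only individual servers cannot derive this ordering; it needs a model of the service as a whole, which is the sense of "application-aware" used here.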

With non-disruptive recovery testing

InfoScale also has a compelling tool that performs non-disruptive recovery testing, called Fire Drill. Fire Drill lets an IT organization simulate disaster recovery tests by starting up a business service at the disaster recovery site as it would in an actual disaster. Because it is a simulation, Fire Drill allows organizations to test recovery readiness with minimal disruption to production environments.

Veritas InfoScale: the answer to over-provisioning

Why purchase four sets of enterprise infrastructure to achieve high availability? And why over-provision by replicating portions of your infrastructure that are not application-aware? Veritas InfoScale is a software-defined infrastructure providing application-aware availability on all leading operating systems and platforms, including Windows®, Linux®, Cisco® UCS Servers, VMware® ESX®, Red Hat® Enterprise Virtualization, Oracle® VM and Microsoft Hyper-V®.

Today, over 7,500 customers (managing over 6,000 petabytes of data) rely on Veritas InfoScale to keep their businesses running.[2] That’s not over-provisioning, that’s optimal-provisioning.

[1] https://en.wikipedia.org/wiki/Veritas_Technologies

[2] Veritas internal customer list
